3D City Modelling of Istanbul

Issues, Challenges and Limitations

The Turkish city of Istanbul is developing a 3D city model aimed mainly at urban planning. The data sources used so far include airborne Lidar, aerial images and 2D maps containing building footprints. Everybody engaged in creating 3D models of large cities faces many issues, challenges and limitations, including excessive data storage requirements, the need for manual editing, incompleteness and other data quality problems. In this article, the authors share their experiences of creating models of the city of Istanbul at levels of detail (LOD) 2 and 3.

The core data for creating the 3D city model of Istanbul was collected by a helicopter flying at a height of 500m and a speed of 80 knots (150km/h) during surveys carried out in 2012 and 2014. The helicopter was equipped with a Q680i Lidar system from RIEGL (Austria), a DigiCam 60MP camera, an AeroControl GNSS/IMU navigation system and an IGI CCNS-5 flight management system. The Lidar point cloud was captured with an average point density of 16 points/m². The images were recorded with a ground sampling distance (GSD) of 5cm and with 60% along-track and 30% across-track overlap. To ensure high geometric accuracy, eight GNSS base stations were used. Recording the whole city, which covers 5,400km², required thorough flight planning, as the flying height and overlap largely determine the data quality. In addition, data accuracy is directly affected by how well the boresight alignment of the IMU, GNSS and camera is calibrated and how stable it remains during the surveys.
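To illustrate how GSD and overlap drive flight planning, the following minimal sketch derives the ground footprint of one image, the distance between consecutive exposures and the spacing between flight lines. The image format of 8,956 by 6,708 pixels and the assumption that the short side points along the flight direction are illustrative guesses, not values given by the authors.

```python
def footprint_and_spacing(gsd_m, px_along, px_across, overlap_along, overlap_across):
    """Return image footprint and exposure/flight-line spacing in metres."""
    along_m = gsd_m * px_along            # footprint length in flight direction
    across_m = gsd_m * px_across          # footprint width across flight direction
    base_m = along_m * (1.0 - overlap_along)              # distance between exposures
    line_spacing_m = across_m * (1.0 - overlap_across)    # distance between flight lines
    return along_m, across_m, base_m, line_spacing_m


# GSD and overlaps are from the article; the pixel counts are assumed.
along, across, base, spacing = footprint_and_spacing(
    gsd_m=0.05, px_along=6708, px_across=8956,
    overlap_along=0.60, overlap_across=0.30,
)
print(f"footprint: {along:.1f} m x {across:.1f} m")
print(f"exposure base: {base:.1f} m, flight-line spacing: {spacing:.1f} m")
```

Under these assumptions, an exposure is needed roughly every 134m along track and flight lines lie roughly 313m apart, which shows why covering 5,400km² requires a very large number of images.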

Processing

The Lidar point clouds were geometrically corrected using RIEGL RiPROCESS and RiANALYZE. The corrected point cloud was stored in 17,000 LAS files, each covering an area of 500m by 700m. Next, these LAS files were used for generating a digital surface model (DSM), a digital elevation model (DEM) and a DSM in which the heights of buildings and other objects refer to the ground surface instead of a local or national reference system. Such a DSM is called a normalized DSM (nDSM). The GSD of the three elevation models was 25cm. Combining the DSM with the simultaneously recorded images enabled the creation of orthoimages. The LAS files were also used for classifying the points on the ground, on buildings and in low, medium and high vegetation using MicroStation V8i Connect, TerraSolid and TerraScan software.
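The relation between the three elevation models can be sketched as a cell-by-cell subtraction: nDSM = DSM − DEM. The function below is a minimal illustration on small in-memory grids, not the software workflow used by the authors. Clamping slightly negative differences to zero and using `None` as a no-data marker are assumptions made for this sketch.

```python
def normalized_dsm(dsm, dem):
    """Return the nDSM (height above ground) for two equally sized grids.

    Cells holding None (assumed no-data marker) stay None. Negative
    differences, which can result from noise, are clamped to 0.0.
    """
    if len(dsm) != len(dem) or any(len(a) != len(b) for a, b in zip(dsm, dem)):
        raise ValueError("DSM and DEM must have the same shape")
    ndsm = []
    for dsm_row, dem_row in zip(dsm, dem):
        row = []
        for surface, ground in zip(dsm_row, dem_row):
            if surface is None or ground is None:
                row.append(None)
            else:
                row.append(max(surface - ground, 0.0))
        ndsm.append(row)
    return ndsm


# Heights in metres above the reference system (made-up sample values).
dsm = [[52.0, 61.5], [50.2, 49.9]]
dem = [[50.0, 50.5], [50.2, 50.0]]
print(normalized_dsm(dsm, dem))  # [[2.0, 11.0], [0.0, 0.0]]
```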

Automatic classification

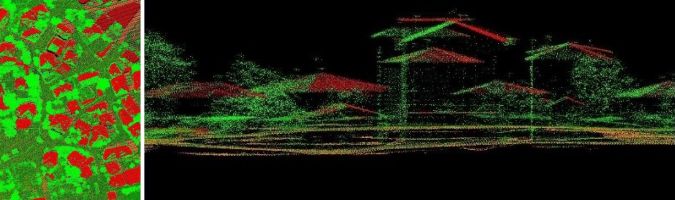

The automatic classification of points achieved an accuracy of 90%. Automatic classification faces severe limitations when adjacent objects are cluttered together. For example, the wrong class was often assigned to buildings close to high trees (Figure 1). Extensive visual checks and manual editing were required to improve the quality of the classification result. Next, the classified points were combined with building footprints extracted from the 1:1,000 base map and with the orthoimages using TerraModeller software to automatically generate around 1.5 million building cubes. Cubes are a 3D representation of level of detail (LOD) 2 (see sidebar). Next, the building blocks were augmented by automatically adding roofs using TerraModeller. Mosques, churches, bridges and other complex structures had to be manually mapped using ZMAP software, however. The base map was created from aerial images. Since roof outlines are mapped rather than the actual building footprints, it is not always possible to separate roofs from high trees automatically, thus again requiring extensive manual editing.
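The idea of combining classified points with footprints to obtain a building block can be sketched as follows. This is a simplified illustration and not the algorithm used by TerraModeller: it selects the points classified as building (class code 6 in the ASPRS LAS specification) that fall inside a footprint polygon and takes the median of their heights above ground as the block height. The function names and the choice of the median are assumptions made for this sketch.

```python
from statistics import median

BUILDING = 6  # ASPRS LAS classification code for building points


def point_in_polygon(x, y, polygon):
    """Ray-casting test; polygon is a list of (x, y) vertices."""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        if (y1 > y) != (y2 > y):  # edge crosses the horizontal line through y
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def block_height(footprint, points):
    """Median height of building points inside the footprint, or None.

    points is an iterable of (x, y, height_above_ground, class_code).
    """
    heights = [
        h for x, y, h, cls in points
        if cls == BUILDING and point_in_polygon(x, y, footprint)
    ]
    return median(heights) if heights else None


footprint = [(0, 0), (10, 0), (10, 10), (0, 10)]
points = [
    (2, 2, 9.8, 6), (5, 5, 10.0, 6), (8, 3, 10.4, 6),  # roof points
    (5, 5, 14.0, 5),                                   # high vegetation above the roof
    (20, 20, 30.0, 6),                                 # a neighbouring building
]
print(block_height(footprint, points))  # 10.0
```

The tree point above the roof is ignored only because it carries the correct class. When a tree is misclassified as building, it distorts the height, which is one reason why the manual checks described above matter.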

CityGML

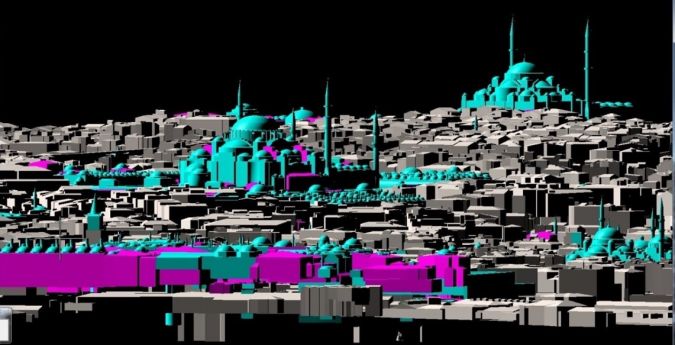

The files generated from processing the Lidar point clouds, aerial images and 2D base maps were converted to CityGML using FME Workbench. Next, topologically and semantically correct LOD 2 3D models of buildings were created – in total 1.5 million – with the help of CityGRID software (Figure 2). Various classifications and automatic and manual corrections were made until the 3D model contained the desired details. Based on architectural 3D CAD files (Figure 3), 3,800 landmarks such as mosques were modelled with greater geometric detail of facades and roofs (LOD 3) than other buildings. Since no georeferenced photos taken at street level were available, no photo texture was draped over any of the buildings, including the landmarks (Figure 4). The non-textured LOD 3 models were based on the CityGRID XML format to facilitate the topologically correct outlining of roofs, facades, footprints and details such as balconies, dormers and chimneys. As a next step, these files still have to be converted to a full CityGML structure.
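To give an impression of what a building looks like in CityGML, the sketch below writes a single building with an identifier and a measured height using the CityGML 2.0 namespaces. It is a bare illustration and not the FME or CityGRID conversion used by the authors: the geometry is omitted entirely, and the function name and the identifier `BLDG_0001` are hypothetical.

```python
import xml.etree.ElementTree as ET

# CityGML 2.0 namespaces (core, building module) and GML.
NS = {
    "core": "http://www.opengis.net/citygml/2.0",
    "bldg": "http://www.opengis.net/citygml/building/2.0",
    "gml": "http://www.opengis.net/gml",
}
for prefix, uri in NS.items():
    ET.register_namespace(prefix, uri)


def qname(prefix, tag):
    """Build a namespace-qualified tag name for ElementTree."""
    return f"{{{NS[prefix]}}}{tag}"


def building_to_citygml(building_id, height_m):
    """Return a CityGML string with one building (attributes only, no geometry)."""
    model = ET.Element(qname("core", "CityModel"))
    member = ET.SubElement(model, qname("core", "cityObjectMember"))
    building = ET.SubElement(
        member, qname("bldg", "Building"), {qname("gml", "id"): building_id}
    )
    height = ET.SubElement(building, qname("bldg", "measuredHeight"), {"uom": "m"})
    height.text = f"{height_m:.2f}"
    return ET.tostring(model, encoding="unicode")


print(building_to_citygml("BLDG_0001", 10.0))
```

A real LOD 2 building would additionally carry its roof, wall and ground surfaces as geometry, which is where the topological and semantic correctness mentioned above comes into play.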

Geospatial database

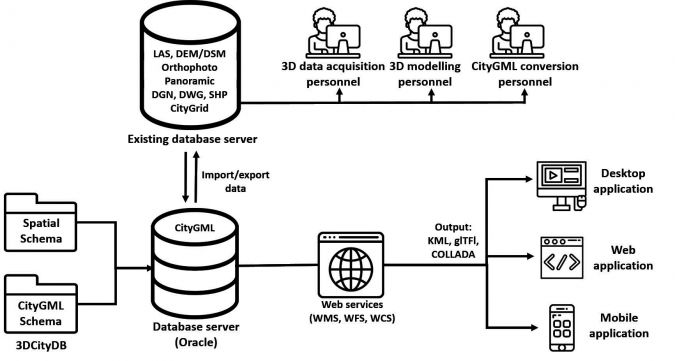

The database currently consumes 135 terabytes and all objects are stored in flat files. However, advanced use of a CityGML spatial database that properly supports urban planning requires the ability to query and visualize it. One option for querying is the open-source 3DCityDB Webclient, which is CityGML compliant and can be used with Oracle Spatial or PostgreSQL/PostGIS. For visualization purposes, the authors plan to use Cesium Virtual Globe, an open-source JavaScript library for creating 3D globes and maps. The proposed system architecture is shown in Figure 5.

Future

The creation of the 3D city model of Istanbul is still a work in progress. Presently, the main data sources consist of an airborne Lidar point cloud, simultaneously recorded aerial images and the building footprints from the 2D base map of Istanbul. Ground-based data collection has been scheduled to increase the level of detail, with respect to both the geometry and the image texture. The preferred technology is laser scanning and 360° panoramic imaging captured simultaneously from a moving car. Many streets in downtown Istanbul are narrow and thus inaccessible to cars. It is planned to capture these parts of the city with backpack mobile mapping systems. For the whole of Istanbul, the ground-based data will cover 32,000 kilometres of roads and streets, resulting in 2.73 petabytes of panoramic image data. The 3D city model is not yet connected to a database containing semantic building information, but this is part of the future development work.
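The storage implications of these figures can be made tangible with a short calculation. The sketch below assumes decimal units (1 petabyte = 10^15 bytes) and compares the planned panoramic image volume with the 135 terabytes the database currently consumes.

```python
GB = 10**9
TB = 10**12
PB = 10**15  # decimal units are an assumption; the article does not specify

panorama_bytes = 2.73 * PB   # planned panoramic image data
road_km = 32_000             # roads and streets to be covered
current_bytes = 135 * TB     # current size of the database

gb_per_km = panorama_bytes / road_km / GB
growth_factor = panorama_bytes / current_bytes

print(f"{gb_per_km:.1f} GB of panoramic imagery per kilometre")
print(f"{growth_factor:.1f} times the current data volume")
```

This works out at about 85 gigabytes per kilometre of street and about 20 times the present data volume, which underlines the storage challenge.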

LOD

(By Mathias Lemmens)

3D city models consist of digital elevation models (DEMs) of the ground surface overlaid with structure and texture of buildings and possibly other objects. Such models may be made at five levels of detail (LOD). The simplest level is LOD 0: a DEM with superimposed ortho-rectified aerial or satellite imagery or a map. At LOD 1, basic block-shaped depictions of buildings are placed over LOD 0. LOD 2 adds detailed roof shapes to LOD 1. LOD 3 represents further expansion by adding to LOD 2 structural elements of greater detail, such as facades and pillars, and draping all objects with photo texture. The highest level, LOD 4, is achieved when buildings can be ‘visited’ virtually and viewed from the inside.

Further Reading

Biljecki, F. (2017). Level of Details in 3D City Models. PhD thesis, TU Delft, The Netherlands, 353 p.

Buyuksalih, G. (2015). Largest 3D city model ever – case study: Istanbul, Turkey. User presentation at RIEGL Lidar 2015, Hongkong-Guangzhou, China.

Kolbe, T. (2015). CityGML goes to Broadway. Photogrammetric Week 2015, Stuttgart, Germany.

Prandi, F., Devigili, F., Soave, M., Di Staso, U., and De Amicis, E. (2015). 3D Web visualization of huge CityGML models. ISPRS Archives Vol. XL-3W3, pp. 601-605

Acknowledgements

All the efforts and help with data collection and processing received from the BIMTAS colleagues – Mr Serdar Bayburt and Dr Ismail Büyüksalih especially – are gratefully acknowledged. Thanks also go to Mr Hanis Rashidan for the design of Figure 5.
