Automatic Object Detection in Point Clouds

Classification Approaches and Method Comparison

Point clouds are used as a data source for mapping tasks in many application fields. But which classification approaches are available for automatic object detection, and what challenges do they face?

Before an object can be mapped, it needs to be detected in the point cloud, preferably by automatic means. Developing detection methods is a complex task because of the diversity of objects, the random structure of point clouds, and the differing characteristics of point clouds captured by airborne, mobile or static scanning systems or derived by image matching. This article introduces classification approaches for automatic object detection and highlights several challenges related to the topic.

Automatic object detection in point clouds is done by separating points into different classes in a process referred to as ‘classification’ or ‘filtering’. The types of objects, and thus the classes, depend mainly on the application for which the point cloud was collected. For example, the classes for a power line maintenance project will be different from the classes of a road maintenance or a city mapping project. This article discusses two classification approaches: point-based and group-based classification. Point-based classification means that the software looks at one point at a time and analyses the attributes of the point, its connection with points in its closest environment or its relationship to a reference element. For group-based classification, points are first assigned to groups in a process that is sometimes also called ‘segmentation’. Then, the software looks at the group and analyses the group geometry and attributes, the similarity to sample groups, or the relationship to other groups or a reference element.

Point-based Classification

Classification based on single points uses attributes that are collected and stored for each point, such as the coordinate values, intensity, time stamp, scan angle or return type and number. Additional attributes may be derived during the processing workflow, for example a distance-from-ground value, normal vector, colour values extracted from images, or vegetation index. At a higher level of classification routines, the geometrical connections between points in the point cloud are considered. The analysis of point-to-point relationships determines whether a point belongs to a surface-like structure, such as the ground, or to linear structures, such as overhead wires. Single isolated points can also be detected by comparing a point to its closest environment.
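The detection of single isolated points by comparing each point to its closest environment can be sketched as follows. This is a minimal illustration and not the routine of any particular software: the function name `find_isolated_points`, the search radius and the neighbour threshold are assumptions made for the example, and the brute-force search would normally be replaced by a spatial index for real point clouds.

```python
import math

def find_isolated_points(points, radius=1.0, min_neighbours=1):
    """Return indices of points that have fewer than `min_neighbours`
    other points within `radius` (hypothetical parameters, in metres)."""
    isolated = []
    for i, p in enumerate(points):
        count = 0
        for j, q in enumerate(points):
            if i != j and math.dist(p, q) <= radius:
                count += 1
                if count >= min_neighbours:
                    break  # enough neighbours found, point is not isolated
        if count < min_neighbours:
            isolated.append(i)
    return isolated

# Three points close together and one far away from the rest.
cloud = [(0.0, 0.0, 0.0), (0.3, 0.0, 0.0), (0.0, 0.4, 0.1), (10.0, 10.0, 5.0)]
print(find_isolated_points(cloud))  # [3]
```

The same neighbourhood search could feed other point-based tests, for example checking whether the neighbours of a point lie on a surface or along a line.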

Different types of reference elements can support the point cloud filtering task. The trajectory determines the scanner position at a certain point in time, which enables the classification of points based on their range or angle from the scanner. Cross sections of tunnels or clearance areas are used for detecting points falling inside or outside a section. Finally, vector data representing topographic objects allows a detailed classification of point clouds. Examples are boundary polygons for classifying points inside or outside of a specific area (e.g. water areas), and centre line elements of corridors for classifying points within a buffer area around the linear element (e.g. points along power lines, railways or roads).
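As an illustration of classification with a linear reference element, the sketch below tests whether a point falls within a buffer around a centre line. It works in 2D plan view only; the function names and the buffer width are assumptions for the example, not part of any specific product.

```python
import math

def distance_to_segment(p, a, b):
    """Shortest 2D distance from point p to the segment a-b."""
    px, py = p
    ax, ay = a
    bx, by = b
    dx, dy = bx - ax, by - ay
    seg_len_sq = dx * dx + dy * dy
    if seg_len_sq == 0.0:
        return math.hypot(px - ax, py - ay)  # degenerate segment
    # Projection parameter of p onto the segment, clamped to [0, 1].
    t = ((px - ax) * dx + (py - ay) * dy) / seg_len_sq
    t = max(0.0, min(1.0, t))
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy))

def within_buffer(point_xy, centre_line, buffer_width):
    """True if the point lies within `buffer_width` of any segment
    of the centre line (a list of 2D vertices)."""
    return any(
        distance_to_segment(point_xy, a, b) <= buffer_width
        for a, b in zip(centre_line, centre_line[1:])
    )

# Hypothetical corridor centre line with one bend and a 2 m buffer.
centre_line = [(0.0, 0.0), (10.0, 0.0), (10.0, 10.0)]
print(within_buffer((5.0, 1.0), centre_line, 2.0))   # True
print(within_buffer((5.0, 3.0), centre_line, 2.0))   # False
```

Points for which `within_buffer` returns true would be assigned to the corridor class; all others keep their previous class.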

Group-based Classification

The group-based classification approach goes further by analysing not only point-to-point relationships but also geometrical characteristics within and between point groups. The distance above the ground of a group, the planarity of points in a group, the shape and width-to-height ratio of a group, the point density and distribution within a group, and the distance between point groups all determine whether the group most likely represents a lamp post, a tree crown, a car, a building roof, a wall or another object of interest. The statistical analysis of point attribute values in a group yields additional information for classification tasks. Specific object types may be represented by typical attribute values in point clouds, such as a dominant colour channel or characteristic intensity values. A common example is the separation of coniferous and deciduous trees by using near-infrared colour values.
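A few of these group characteristics can be computed with very little code. The sketch below derives the distance above ground, the extents and the width-to-height ratio of a group and applies two simple rules. The thresholds, the class names and the flat `ground_z` value are purely illustrative assumptions; operational software would use more features (such as planarity and point density) and tuned parameters.

```python
def group_features(points, ground_z=0.0):
    """Compute simple geometric features of one point group.
    `ground_z` is an assumed flat ground elevation under the group."""
    xs, ys, zs = zip(*points)
    width = max(max(xs) - min(xs), max(ys) - min(ys))
    height = max(zs) - min(zs)
    return {
        "base_above_ground": min(zs) - ground_z,
        "width": width,
        "height": height,
        "width_to_height": width / height if height > 0 else float("inf"),
    }

def guess_class(f):
    """Rule-based guess with hypothetical thresholds (metres)."""
    if f["height"] >= 3.0 and f["width_to_height"] <= 0.2:
        return "pole-like"
    if (f["base_above_ground"] <= 0.5 and f["height"] <= 2.5
            and f["width_to_height"] >= 1.0):
        return "low, wide object"
    return "unclassified"

pole = [(0.0, 0.0, 0.0), (0.1, 0.0, 2.0), (0.0, 0.1, 4.0), (0.1, 0.1, 6.0)]
car = [(0.0, 0.0, 0.2), (4.0, 0.0, 0.2), (4.0, 1.8, 1.5), (0.0, 1.8, 1.5)]
print(guess_class(group_features(pole)))  # pole-like
print(guess_class(group_features(car)))   # low, wide object
```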

The detection of objects in a point cloud can be supported by the use of group samples. The sample represents a typical entity of an object type, such as a street lamp or a pole. In the detection process, the software compares a group with samples stored in a library and assigns the corresponding class.
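The comparison against a sample library can be pictured as a nearest-sample search. The sketch below reduces each group and each sample to a hypothetical (width, height) feature pair and picks the closest sample within a tolerance. The library contents, the feature choice and the `max_distance` tolerance are assumptions for illustration; how real software measures similarity between a group and a sample is not specified here and is likely richer.

```python
import math

# Hypothetical sample library: class name -> (width, height) in metres.
SAMPLE_LIBRARY = {
    "street lamp": (0.8, 8.0),
    "pole": (0.3, 6.0),
}

def match_sample(features, library, max_distance=1.0):
    """Return the class of the most similar sample, or None if no
    sample is within `max_distance` in feature space."""
    best_name, best_dist = None, math.inf
    for name, sample in library.items():
        d = math.dist(features, sample)
        if d < best_dist:
            best_name, best_dist = name, d
    return best_name if best_dist <= max_distance else None

print(match_sample((0.35, 6.2), SAMPLE_LIBRARY))  # pole
print(match_sample((5.0, 1.5), SAMPLE_LIBRARY))   # None
```

Returning `None` for groups that resemble no sample keeps unknown objects out of the sample classes instead of forcing a match.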

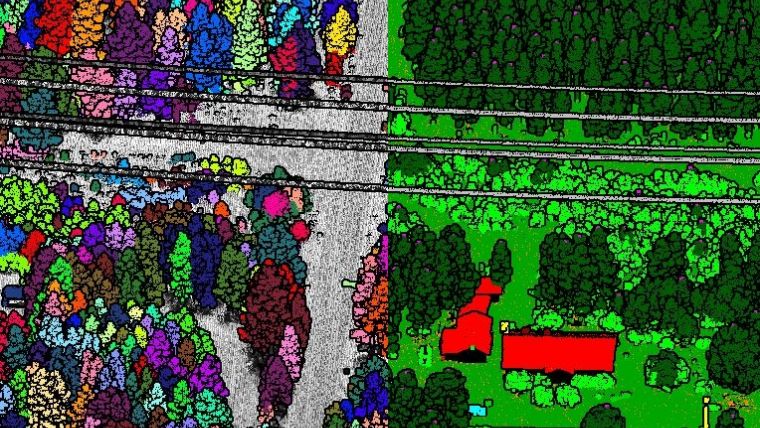

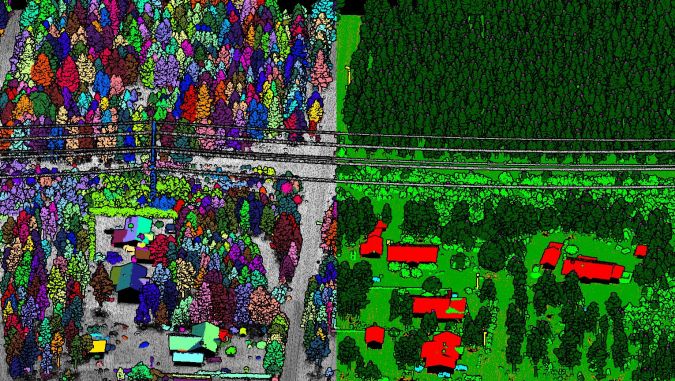

Reference elements can be used for the classification of point groups in a similar way as for single points, but there are more options for defining the relationship between a group and the reference element. For example, a group may be treated as lying inside a boundary polygon if all of its points, the majority of them or just a given number of points fall inside the polygon. The classification of key points in groups, such as the highest, lowest and/or centre point of a group, can be useful for analysis tasks. A typical example is the derivation of tree heights, which are mapped by the highest points of groups representing trees (Figure 1).
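The options for relating a group to a boundary polygon, and the extraction of a key point, can be sketched as follows. The rule names (`"all"`, `"majority"`, `"count"`) and function names are assumptions chosen for the example; the point-in-polygon test is a standard ray-casting check in plan view.

```python
def point_in_polygon(x, y, polygon):
    """Ray-casting test: True if (x, y) lies inside the 2D polygon."""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        if (y1 > y) != (y2 > y):  # edge crosses the horizontal ray
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside

def group_inside(points, polygon, rule="majority", min_count=1):
    """Decide whether a group counts as inside the polygon."""
    n_inside = sum(point_in_polygon(x, y, polygon) for x, y, _ in points)
    if rule == "all":
        return n_inside == len(points)
    if rule == "majority":
        return n_inside > len(points) / 2
    if rule == "count":
        return n_inside >= min_count
    raise ValueError(f"unknown rule: {rule}")

def highest_point(points):
    """Key point of a group, e.g. the top of a tree."""
    return max(points, key=lambda p: p[2])

area = [(0.0, 0.0), (10.0, 0.0), (10.0, 10.0), (0.0, 10.0)]
tree = [(1.0, 1.0, 2.0), (5.0, 5.0, 12.0), (9.0, 9.0, 7.0), (11.0, 5.0, 3.0)]
print(group_inside(tree, area, "all"))       # False
print(group_inside(tree, area, "majority"))  # True
print(highest_point(tree))                   # (5.0, 5.0, 12.0)
```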

Method Comparison

The group-based classification approach has clear advantages for the automatic detection of above-ground objects in point clouds. Point groups provide information about the geometry and other characteristics of an object. By analysing the information, automatic filtering routines can directly assign a group to a specific object class. In addition, the comparison of groups to group samples enables the discovery of objects of the same type in the point cloud.

In contrast, the point-based classification approach seldom relates points directly to an object type. Attributes of a single point are most often not object-specific due to the diverse and random nature of point clouds. Thus, the approach is suitable for the detection of isolated points and of surface-like and linear structures, but it is of very limited use for the automatic detection of above-ground objects.

The processing effort is lower for point-based classification. It can be started directly after internal positional errors are corrected in the point cloud. The group-based approach relies on group assignment before the classification of the point cloud starts. Therefore, the classification result depends mainly on the quality of the grouping.
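To see why the grouping quality matters, consider one simple way of forming groups: joining points that are connected through chains of neighbours no further apart than a maximum gap. The grouping method actually used by a given software is not described here, so the function `group_points` and its `max_gap` parameter are assumptions for illustration only.

```python
import math
from collections import deque

def group_points(points, max_gap):
    """Assign a group label to every point by region growing:
    points closer than `max_gap` end up in the same group."""
    labels = [-1] * len(points)
    current = 0
    for seed in range(len(points)):
        if labels[seed] != -1:
            continue  # already assigned to a group
        labels[seed] = current
        queue = deque([seed])
        while queue:
            i = queue.popleft()
            for j in range(len(points)):
                if labels[j] == -1 and math.dist(points[i], points[j]) <= max_gap:
                    labels[j] = current
                    queue.append(j)
        current += 1
    return labels

# Two objects standing 2 m apart along the x axis.
pts = [(0.0, 0.0, 0.0), (0.5, 0.0, 0.0), (1.0, 0.0, 0.0),
       (3.0, 0.0, 0.0), (3.4, 0.0, 0.0)]
print(group_points(pts, max_gap=0.6))  # [0, 0, 0, 1, 1]
print(group_points(pts, max_gap=2.5))  # [0, 0, 0, 0, 0]
```

With a gap that is too large, the two objects merge into one group, and any later group-based rule sees a single, wider object. With a gap that is too small, a single object breaks into fragments.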

Challenges

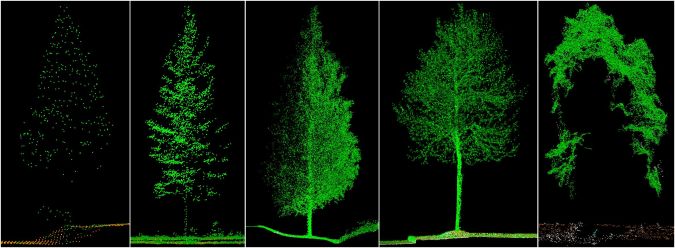

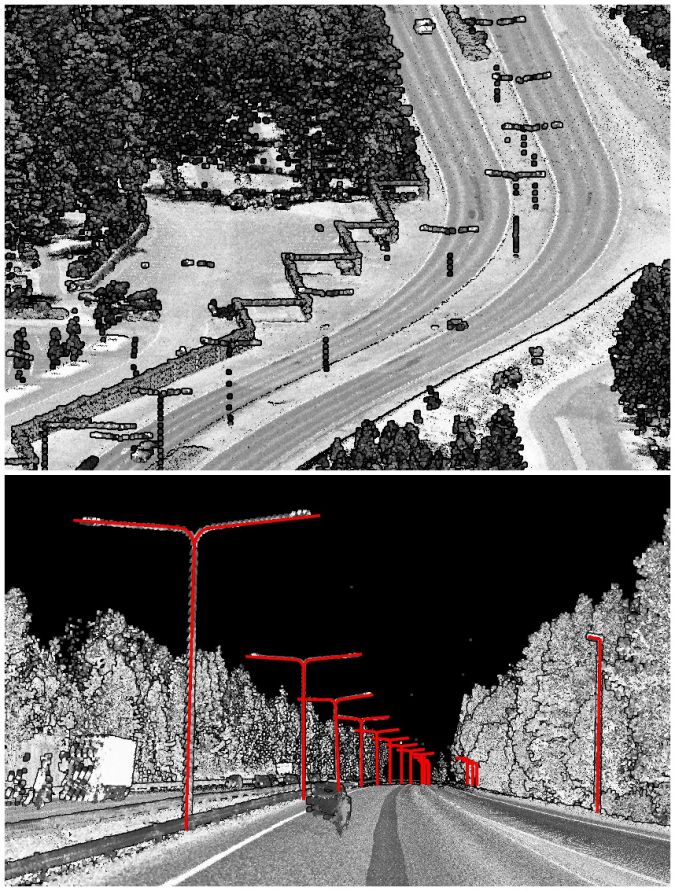

Point clouds collected with different scanner systems or created by image matching software represent the same object type in different ways regarding point density, viewing angle, sharpness and so on. For example, in an airborne point cloud a tree is mainly represented by its crown seen from above. Points from inside the tree crown and the ground around the tree may be included if the point cloud is dense enough and if the laser beam was able to penetrate the crown. In a mobile point cloud, a tree is seen from the side and from below the tree crown. Therefore, much more information is available about the tree trunk, limb and crown structure, but not necessarily about the top of the tree crown. In an image matching point cloud, most often only the outer layer of a tree is represented without clear information about the tree shape and structure (Figure 2). The smaller or thinner an object is, the more dependent the detection ability is on the point cloud density and viewing angle of the scanner. While poles along roads and railways are easy to detect in dense mobile point clouds, they are hardly detectable in less dense airborne point clouds where the scanner does not always capture the vertical part of a pole (Figure 3).

Another challenge for automatic object detection is the variety of object types that are present in point clouds of different application fields. Furthermore, the same object type may look very different in different countries and regions of the world. This applies not only to natural objects, such as trees, but also to man-made objects like buildings, road and railway furniture, power line towers and so on. Automatic detection algorithms therefore have to be flexible enough to cope with many different object types in various types of point cloud. Predefined libraries with sample objects can only provide a starting point for country-specific, region-specific and application-specific extensions. Machine learning and artificial intelligence methods appear promising for improving automatic object detection in point clouds in the future.

Further Reading

http://www.terrasolid.com/download/presentations/2017/classification_using_groups.pdf
