Deconstructing digital cultural heritage

Segmenting and annotating multi-scalar 3D heritage data

Whether as 2D drawings or 3D point clouds, digital data is becoming the norm in heritage documentation. However, raw digital data still requires manual labelling in order to add semantic information to the otherwise strictly geometric data. This article presents an algorithmic approach to systematically deconstructing 3D heritage data into distinct elements and annotating them semantically. The developed method helps alleviate labelling tasks and provides training data for future developments in artificial intelligence-based segmentation.

The importance of proper heritage documentation continues to increase in the face of potential damage by both natural and human threats. In recent decades, developments in geomatics have made 3D scanning easier and more feasible for conservation actors. Great strides have been made in photogrammetry and laser scanning, two of the dominant methods of 3D data generation in heritage documentation. In support of heritage management, systems such as 3D GIS and heritage building information modelling (HBIM) have also been developed.

Tackling the two main bottlenecks

The main bottleneck in creating a unified multi-stakeholder heritage management system is the manual digitization and labelling of elements in the point cloud. This labelling is necessary in order to properly understand the scene and thus perform meaningful analysis through the 3D GIS or HBIM frameworks. While significant progress has been made in using artificial intelligence for these tasks, this too encounters a bottleneck: the availability of training data. Furthermore, in the heritage domain, the diversity of architectural styles around the world adds another layer to this problem. The developed approach attempts to tackle these two bottlenecks at the same time. Firstly, algorithmic or rule-based approaches generate labelled datasets rapidly and precisely enough for certain simpler case studies. Secondly, the results can thereafter be reused as training data, as a sort of bootstrapping method for any future machine learning-based, or indeed deep learning-based, approaches.

Quality control

Prior to performing operations on the point cloud itself, proper quality control is necessary. This aspect of 3D heritage documentation is essential, yet in practice often forgotten. While visual aspects may enamour users, geometric precision is nevertheless an integral part of any heritage archiving effort. Indeed, surveyors and geomatics engineers have an obligation to uphold the geometric quality of geospatial products, all the more so when those products are intended for long-term heritage archiving.

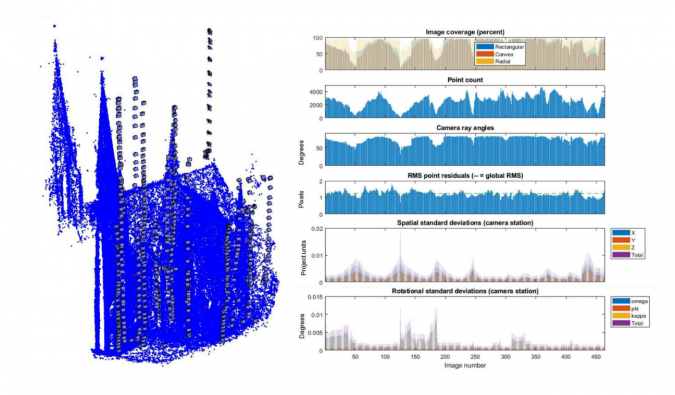

In photogrammetry, for example, structure from motion (SfM) and dense matching algorithms now produce visually impressive results. However, the black-box nature of SfM software makes quality control harder to perform. To address this problem, the author and his team developed a protocol to extract quality metrics from SfM photogrammetry projects using the damped bundle adjustment toolbox (DBAT) (see Figure 1 for an example). Using this open-source method for photogrammetric quality assessment enables problems in the project to be identified early and therefore rectified before the error propagates further. Amongst other things, DBAT provides classical photogrammetric metrics which are often missing in modern black-box SfM-based 3D reconstruction software, e.g. exterior orientation covariance matrices and intersection angles.
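To make one of these metrics concrete, the short Python sketch below computes the intersection angle between two image rays that observe the same 3D point. It is an illustration only and not DBAT code; the function name and the example coordinates are assumptions made for this sketch.

```python
import math
from typing import Sequence


def intersection_angle_deg(cam_a: Sequence[float],
                           cam_b: Sequence[float],
                           point: Sequence[float]) -> float:
    """Angle (degrees) between the rays from two camera centres to one 3D point."""
    ray_a = [p - c for p, c in zip(point, cam_a)]
    ray_b = [p - c for p, c in zip(point, cam_b)]
    dot = sum(a * b for a, b in zip(ray_a, ray_b))
    norm_a = math.sqrt(sum(a * a for a in ray_a))
    norm_b = math.sqrt(sum(b * b for b in ray_b))
    # Clamp to [-1, 1] to guard against rounding before calling acos.
    cos_angle = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    return math.degrees(math.acos(cos_angle))


# Hypothetical geometry: two cameras 2 m apart, point 1 m in front of the baseline centre.
angle = intersection_angle_deg((-1.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 0.0, 1.0))
print(round(angle, 3))
```

Very small intersection angles indicate weak ray geometry and therefore poorly determined depth, which is why such a metric is useful to inspect before trusting the point cloud.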

Multi-scalar division

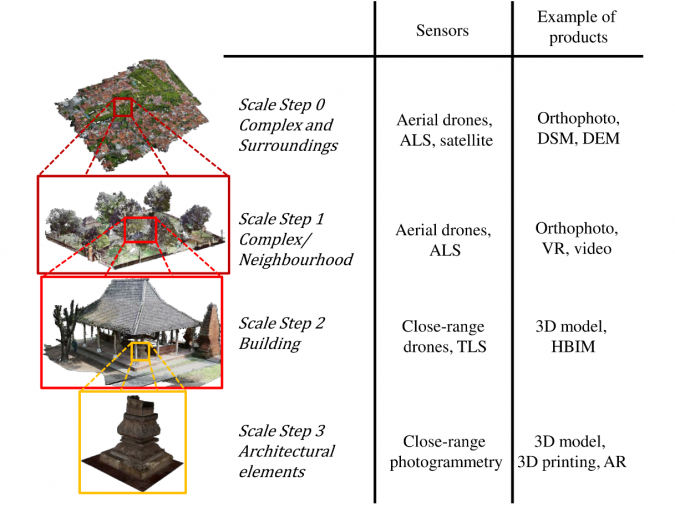

After the quality of the project has been verified, the resulting point cloud may be manipulated further. The first step in the author’s developed approach is to systematically divide 3D heritage data into multiple scale steps. For example, the first scale step involves large areas surrounding a certain site which may be scanned by unmanned aerial vehicles (UAVs or ‘drones’). The second step may then concern single buildings, which may be reconstructed by terrestrial laser scanning (TLS) or photogrammetry. A third step may then involve more detailed parts of the building, i.e. architectural elements such as pillars, walls and floors. This coarse-to-fine segmentation approach helps the algorithmic functions to create a systematic hierarchy of semantic information, and also greatly reduces processing time. Figure 2 illustrates this multi-scalar division on a case study performed on the 16th-century Kasepuhan palatial complex in Cirebon, Indonesia.

The multi-scalar approach also attempts to accommodate the various 3D sensors available today. Indeed, each method may be suited for a certain scale level but not for others, so a hierarchical structuring of this data can also be useful for data management purposes.
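One possible way to hold such a hierarchy in code is a simple tree in which every node carries its scale step, its source sensor and its semantic attributes. The sketch below is a minimal illustration under that assumption; the class name `ScaleNode`, its fields and the example labels are hypothetical and are not taken from the author's implementation.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterator, List, Tuple


@dataclass
class ScaleNode:
    """One labelled segment at a given scale step (hypothetical structure)."""
    name: str
    scale_step: int                 # 1 = complex, 2 = building, 3 = architectural element
    sensor: str = "unknown"         # e.g. "UAV", "TLS", "photogrammetry"
    attributes: Dict[str, str] = field(default_factory=dict)
    children: List["ScaleNode"] = field(default_factory=list)

    def add(self, child: "ScaleNode") -> "ScaleNode":
        # Enforce the coarse-to-fine order: a child sits exactly one step below its parent.
        if child.scale_step != self.scale_step + 1:
            raise ValueError("child must be exactly one scale step finer than its parent")
        self.children.append(child)
        return child

    def walk(self, path: Tuple[str, ...] = ()) -> Iterator[Tuple[Tuple[str, ...], "ScaleNode"]]:
        # Depth-first traversal yielding (path from root, node).
        here = path + (self.name,)
        yield here, self
        for child in self.children:
            yield from child.walk(here)


site = ScaleNode("complex", 1, sensor="UAV")
hall = site.add(ScaleNode("building_A", 2, sensor="TLS", attributes={"class": "building"}))
hall.add(ScaleNode("pillar_01", 3, attributes={"class": "pillar"}))

# Query every element labelled as a pillar, with its full path in the hierarchy.
pillars = ["/".join(p) for p, node in site.walk() if node.attributes.get("class") == "pillar"]
print(pillars)  # ['complex/building_A/pillar_01']
```

Keeping the sensor on each node reflects the idea that each acquisition method suits a particular scale level.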

Segmentation and annotation

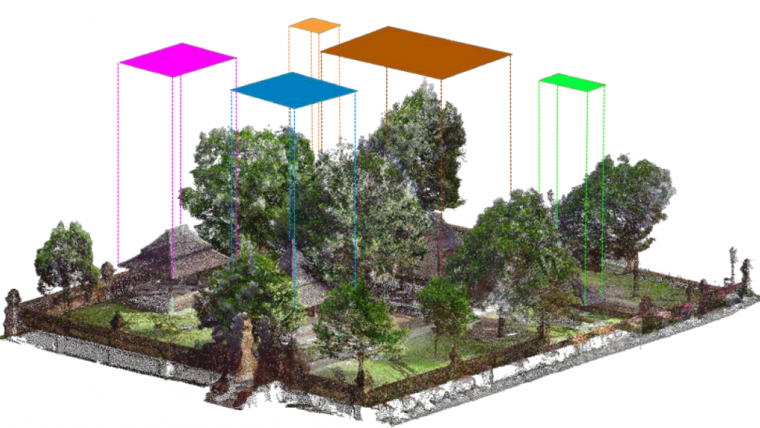

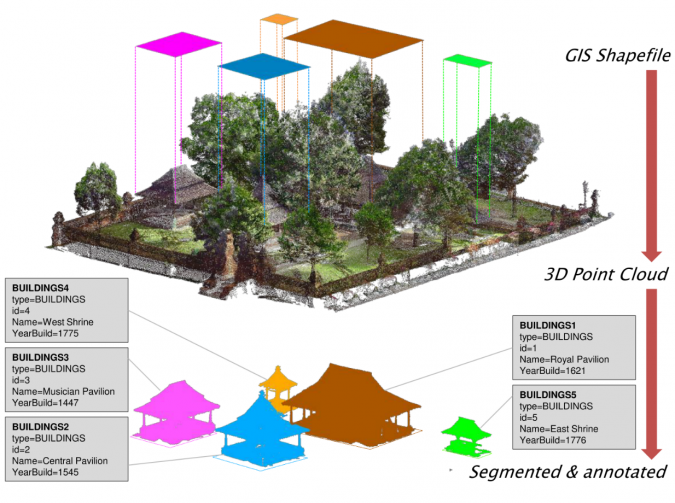

Following the multi-scalar paradigm which has been previously established, the deconstruction then starts with the segmentation of buildings from a larger point cloud, i.e. that of the surrounding complex. This was performed with the help of pre-existing 2D GIS files. Such GIS files are often already available for heritage sites, but a quick vectorization based on satellite or UAV images is also possible if such data is absent. The use of GIS also presents another advantage: semantic class and information are embedded as attributes in GIS layers and entities. The developed ‘cookie-cutter’ algorithm thus performs geometric segmentation using the GIS vector polygons as guides. The semantic attribute linked to each polygon entity may thereafter be annotated automatically to the segmented result. Figure 3 shows the result of such an operation, whereby the GIS vector acted as a 2.5D mould to segment the raw point cloud. Automatic labelling using GIS attributes means that the resulting building point clouds are queryable in the basic database sense.
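The principle of the 'cookie-cutter' step can be sketched in a few lines of Python: each point is tested against the 2D footprint polygons, its height is ignored (hence 2.5D), and the points that fall inside a footprint inherit that polygon's attributes. This is a simplified illustration, not the author's code; the function names, the even-odd ray-casting test and the toy coordinates are assumptions.

```python
from typing import Dict, List, Sequence, Tuple

Point3D = Tuple[float, float, float]
Ring = Sequence[Tuple[float, float]]


def point_in_polygon(x: float, y: float, ring: Ring) -> bool:
    """Even-odd ray-casting test in the XY plane (ring does not need to be closed)."""
    inside = False
    n = len(ring)
    for i in range(n):
        x1, y1 = ring[i]
        x2, y2 = ring[(i + 1) % n]
        # Only edges that straddle the horizontal line through y can be crossed.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def cookie_cut(points: Sequence[Point3D], footprints: Sequence[Dict]) -> List[Dict]:
    """Assign each point to the first footprint containing it; Z is ignored (2.5D)."""
    segments = [{"attributes": dict(f["attributes"]), "points": []} for f in footprints]
    for x, y, z in points:
        for footprint, segment in zip(footprints, segments):
            if point_in_polygon(x, y, footprint["ring"]):
                segment["points"].append((x, y, z))
                break
    return segments


# Toy data: two square footprints with GIS-like attributes and four points.
footprints = [
    {"attributes": {"name": "hall", "class": "building"}, "ring": [(0, 0), (2, 0), (2, 2), (0, 2)]},
    {"attributes": {"name": "gate", "class": "building"}, "ring": [(10, 0), (12, 0), (12, 2), (10, 2)]},
]
points = [(1.0, 1.0, 0.0), (1.0, 1.0, 5.0), (5.0, 5.0, 2.0), (11.0, 1.0, 3.0)]

segments = cookie_cut(points, footprints)
counts = {s["attributes"]["name"]: len(s["points"]) for s in segments}
print(counts)  # {'hall': 2, 'gate': 1}
```

Because every segment keeps the attribute dictionary of its polygon, the result can be filtered by name or class in the same way as the original GIS layer. A real point cloud with millions of points would need a spatial index or a vectorized test rather than this per-point loop.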

The result of this segmentation, from heritage complex to heritage buildings, therefore consists of individual buildings with semantic information derived from the original GIS file and geometric data from the original point cloud. In this regard, it is an example of instance segmentation as opposed to the more general semantic segmentation. As far as segmentation quality is concerned, the author’s algorithm achieved an F1 score of 89.32% on the tested dataset using this approach.
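For readers unfamiliar with the metric, the F1 score is the harmonic mean of precision and recall. The sketch below shows the standard calculation from true positive, false positive and false negative counts. The counts used are invented for illustration and are not the article's data, and the article does not state whether its score was computed per point or per instance.

```python
from typing import Tuple


def precision_recall_f1(tp: int, fp: int, fn: int) -> Tuple[float, float, float]:
    """Return (precision, recall, F1) from raw counts, with 0.0 for undefined ratios."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0.0:
        return precision, recall, 0.0
    f1 = 2.0 * precision * recall / (precision + recall)
    return precision, recall, f1


# Invented counts: 90 points correctly segmented, 10 wrongly included, 10 missed.
precision, recall, f1 = precision_recall_f1(tp=90, fp=10, fn=10)
print(f"precision={precision:.2%} recall={recall:.2%} F1={f1:.2%}")
```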

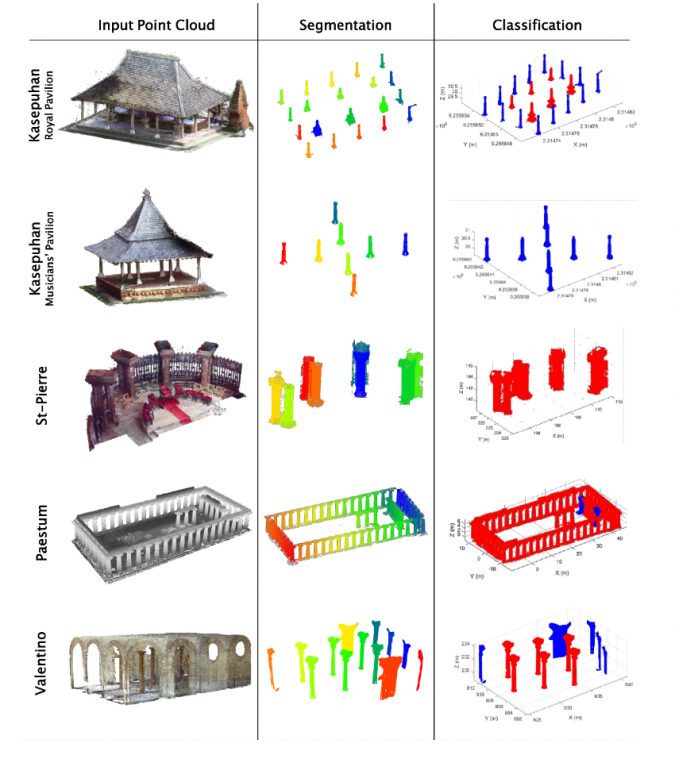

Further deconstruction concerns the disassembling of the buildings (results of the previous step) into architectural elements. Various geometric rules were used in the process, not only to perform simple segmentation but also to attribute classes to each instance. The author and his team developed several functions to perform the detection and classification of two architectural elements commonly found throughout heritage data, namely pillars/columns and roof frames. The detection combines several techniques, including RANSAC, the Hough transform and nearest-neighbour segmentation. The pillar detection algorithm was tested on five different datasets with various architectural styles, and successfully performed the task with an average F1 score of 88.97%. It also managed to correctly determine the number of ‘pillars’ (defined as building supports having a circular cross-section, shown in red in the ‘classification’ column of Figure 4) as opposed to ‘non-pillars’ (shown in blue in the ‘classification’ column of Figure 4).
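To illustrate how a circular cross-section might be recognized, the sketch below fits a circle to a horizontal slice of points with a basic RANSAC loop. It is a generic textbook-style example, not the author's pillar detector: the function names, the tolerance, the iteration count and the synthetic slice are all assumptions.

```python
import math
import random
from typing import List, Optional, Sequence, Tuple

Point2D = Tuple[float, float]
Circle = Tuple[float, float, float]  # (centre x, centre y, radius)


def circle_from_three(p1: Point2D, p2: Point2D, p3: Point2D) -> Optional[Circle]:
    """Circumscribed circle of three points, or None if they are (nearly) collinear."""
    (ax, ay), (bx, by), (cx, cy) = p1, p2, p3
    d = 2.0 * (ax * (by - cy) + bx * (cy - ay) + cx * (ay - by))
    if abs(d) < 1e-12:
        return None
    a2, b2, c2 = ax * ax + ay * ay, bx * bx + by * by, cx * cx + cy * cy
    ux = (a2 * (by - cy) + b2 * (cy - ay) + c2 * (ay - by)) / d
    uy = (a2 * (cx - bx) + b2 * (ax - cx) + c2 * (bx - ax)) / d
    return ux, uy, math.hypot(ax - ux, ay - uy)


def ransac_circle(points: Sequence[Point2D], tol: float, iterations: int,
                  seed: int = 0) -> Tuple[Optional[Circle], List[Point2D]]:
    """Keep the three-point circle supported by the most points within tol of its rim."""
    rng = random.Random(seed)
    best, best_inliers = None, []
    for _ in range(iterations):
        candidate = circle_from_three(*rng.sample(list(points), 3))
        if candidate is None:
            continue
        cx, cy, r = candidate
        inliers = [p for p in points if abs(math.hypot(p[0] - cx, p[1] - cy) - r) <= tol]
        if len(inliers) > len(best_inliers):
            best, best_inliers = candidate, inliers
    return best, best_inliers


# Synthetic slice: 40 points on a 0.25 m radius column plus 5 unrelated points.
section = [(2.0 + 0.25 * math.cos(2 * math.pi * k / 40),
            3.0 + 0.25 * math.sin(2 * math.pi * k / 40)) for k in range(40)]
section += [(5.0 + 0.1 * k, 5.0) for k in range(5)]

circle, inliers = ransac_circle(section, tol=0.005, iterations=200, seed=0)
inlier_ratio = len(inliers) / len(section)
print(circle, inlier_ratio)
```

A high inlier ratio suggests a circular cross-section, so a simple rule could label such a segment a 'pillar' and anything else a 'non-pillar'. Any threshold on the ratio would be a tuning choice rather than a value given in the article.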

Concluding remarks

The approach described in this article had the final objective of aiding the process of point cloud segmentation and classification in the context of cultural heritage documentation. In this regard, the developed algorithms showed promising results, with F1 scores of 89.32% for building segmentation and 88.97% on average for pillar detection. Indeed, this type of heuristic approach may well be sufficient in many simple cases, without the need to resort to machine learning. Furthermore, the author proposes a thorough workflow that includes geometric quality assessment at the beginning of the process. However, the algorithm’s efficacy may encounter more constraints when dealing with more diverse datasets, as is typical in the cultural heritage domain. It is therefore also worth exploring the use of this type of algorithmic approach to point cloud segmentation for generating training datasets to support artificial intelligence-based approaches in the future.

Acknowledgments

This research is part of a PhD dissertation financed by the Indonesian Endowment Fund for Education (LPDP). Thanks are also due to the Bandung Institute of Technology (Indonesia), Politecnico di Torino (Italy) and FBK Trento (Italy) who agreed to share their datasets for the tests.

Further reading

Murtiyoso, A.; Grussenmeyer, P. Virtual Disassembling of Historical Edifices: Experiments and Assessments of an Automatic Approach for Classifying Multi-Scalar Point Clouds into Architectural Elements. Sensors 2020, 20, 2161. https://doi.org/10.3390/s20082161

Murtiyoso, A.; Grussenmeyer, P.; Suwardhi, D.; Awalludin, R. Multi-Scale and Multi-Sensor 3D Documentation of Heritage Complexes in Urban Areas. ISPRS Int. J. Geo-Inf. 2018, 7, 483. https://doi.org/10.3390/ijgi7120483

Murtiyoso, A.; Grussenmeyer, P.; Börlin, N.; Vandermeerschen, J.; Freville, T. Open Source and Independent Methods for Bundle Adjustment Assessment in Close-Range UAV Photogrammetry. Drones 2018, 2, 3. https://doi.org/10.3390/drones2010003
