Realistic virtual reality environments from point clouds

Immersive and interactive surveying labs

How can point cloud technologies be used to create a realistic virtual environment for use as immersive and interactive surveying labs?

The advent of cost-effective head-mounted displays marked a new era in immersive virtual reality (VR) and sparked widespread applications in engineering, science and education. An integral component of any virtual reality application is the virtual environment. While some applications may have a completely imaginary virtual environment, others require the realistic recreation of a site or building. Relevant examples can be found in gaming, heritage site preservation and building information modelling. This article discusses how point cloud technologies were used to create a realistic virtual environment for use as immersive and interactive surveying labs.

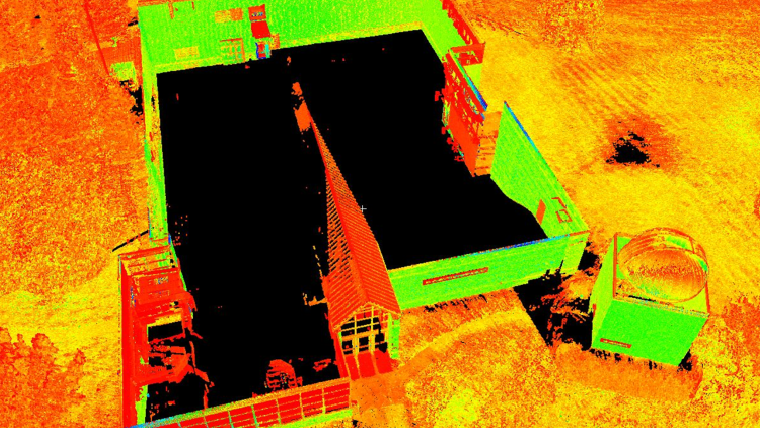

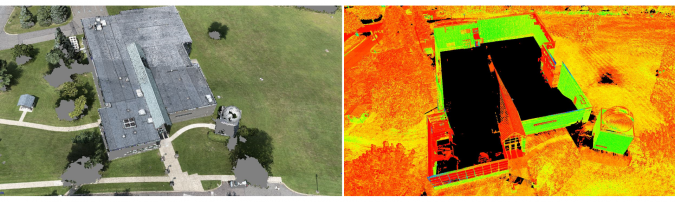

Creating a virtual environment that is based on a physical site requires highly detailed geometric information. Technologies such as unmanned aerial systems (UAS) and terrestrial laser scanning (TLS) generate dense and accurate 3D point clouds. The two methods have complementary strengths and weaknesses. For example, a UAS maps from altitude with a downward-looking view angle, while scanners are set up at vantage points and are limited by line-of-sight obstructions. Identifying the data gaps in each dataset and merging the point clouds produces a more complete point cloud. For our research, we recreated part of the Penn State Wilkes-Barre campus. We conducted UAS flights with nadir imaging, collected TLS scans from various locations, mostly around the building, and merged the point clouds with a custom merging algorithm. Figure 1 shows an example of the data gaps in the UAS and TLS datasets. In this case, the UAS point cloud has gaps around tree areas and cannot capture the vertical walls of the building. The TLS dataset, on the other hand, captures the building’s vertical walls but not the top of the building, which the UAS dataset covers. The TLS dataset was used as the reference and consisted of 180 million points. The UAS point cloud had 70 million points, of which 25 million were kept to fill gaps in the TLS dataset.
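The gap-filling idea can be illustrated with a minimal Python sketch. It is not the custom merging algorithm used in this project, whose details are not given here. It assumes that both point clouds are already registered in the same coordinate system, and it uses a simple voxel occupancy test as a stand-in: a point from the secondary cloud is kept only if the reference cloud has no points in the same or a neighbouring voxel. The function names, the voxel size and the toy coordinates are all hypothetical.

```python
from itertools import product


def voxel_key(point, size):
    """Map an (x, y, z) point to the integer index of the voxel containing it."""
    return tuple(int(c // size) for c in point)


def fill_gaps(reference, secondary, size=0.5):
    """Return the secondary points that fall where the reference has no data.

    A secondary point is kept only if its own voxel and all 26 neighbouring
    voxels contain no reference point. `size` is the voxel edge length in the
    units of the point coordinates (an assumed value, to be tuned per project).
    """
    occupied = {voxel_key(p, size) for p in reference}
    offsets = list(product((-1, 0, 1), repeat=3))
    kept = []
    for p in secondary:
        i, j, k = voxel_key(p, size)
        if not any((i + di, j + dj, k + dk) in occupied for di, dj, dk in offsets):
            kept.append(p)
    return kept


# Toy data (made up): the scanner sees a wall, the aerial survey sees the roof
# and one point on the same wall.
tls_points = [(0.0, 0.0, z * 0.25) for z in range(12)]   # wall, z = 0.0 to 2.75
uas_points = [
    (0.1, 0.0, 2.5),   # overlaps the wall already covered by the scanner
    (2.0, 0.0, 3.0),   # roof, no scanner coverage
    (3.0, 0.0, 3.0),   # roof, no scanner coverage
]

gap_points = fill_gaps(tls_points, uas_points, size=0.5)
merged = tls_points + gap_points
print(len(gap_points), len(merged))  # 2 14
```

Keeping the reference cloud untouched and adding only the gap points mirrors the idea of using the TLS data as the reference. For clouds of many millions of points, a spatial index from a point cloud library would be a more practical choice than a Python set.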

Terrain and structures modelling

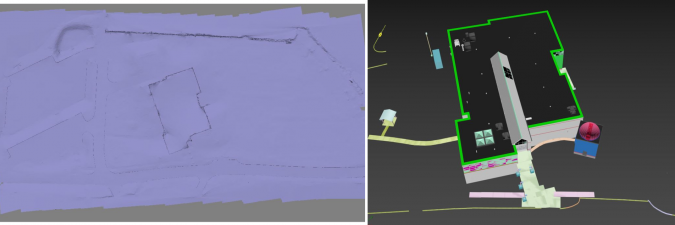

The terrain and any man-made structures can be modelled from the merged point cloud. Using existing segmentation algorithms, it is relatively easy to separate the point cloud into ground and non-ground points and create a terrain mesh from the ground points. While detailed meshes of several million faces can be created, they pose challenges for the game engines (such as Unity and Unreal) that are often used to create virtual environments. For a pleasant virtual experience, it is important to always maintain a minimum of 60 frames per second (FPS), and virtual reality hardware manufacturers often recommend at least 90 FPS. This means that detailed meshes of a few million faces need to be simplified to a few thousand faces without compromising the accuracy of the terrain. For example, in our implementation the initial terrain mesh had 4 million faces, and the frame rate dropped below 60 FPS when approaching complex areas (such as around the building). We simplified the terrain to 50,000 faces, at the cost of a 5cm difference in root mean square error (RMSE). Figure 2 (left) shows this simplified terrain. The terrain mesh was then converted to a Unity Terrain to take advantage of Unity’s occlusion culling, which renders only the objects that are visible in the user’s field of view, thus ensuring that 90 FPS is consistently maintained.
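Two of the numbers above lend themselves to a quick check: the time available per frame at a given frame rate, and the RMSE introduced by simplification. The Python sketch below is illustrative only. It simplifies a one-dimensional height profile by keeping every n-th sample and interpolating linearly, which is a deliberately crude stand-in for mesh decimation, and it assumes the RMSE is computed between original and simplified heights at the same locations. The function names and the profile values are hypothetical.

```python
import math


def frame_budget_ms(fps):
    """Time available to render one frame, in milliseconds."""
    return 1000.0 / fps


def rmse(reference, candidate):
    """Root mean square error between two equally long lists of heights."""
    if len(reference) != len(candidate):
        raise ValueError("height lists must have the same length")
    squared = [(r - c) ** 2 for r, c in zip(reference, candidate)]
    return math.sqrt(sum(squared) / len(squared))


def decimate_profile(heights, step):
    """Keep every `step`-th height and linearly interpolate the ones in between."""
    kept = list(range(0, len(heights), step))
    if kept[-1] != len(heights) - 1:
        kept.append(len(heights) - 1)  # always keep the last sample
    rebuilt = []
    for a, b in zip(kept, kept[1:]):
        for i in range(a, b):
            t = (i - a) / (b - a)
            rebuilt.append(heights[a] + t * (heights[b] - heights[a]))
    rebuilt.append(heights[kept[-1]])
    return rebuilt


# Made-up terrain profile in metres with 10cm bumps.
profile = [0.0, 0.1, 0.0, 0.1, 0.0]
simplified = decimate_profile(profile, step=2)

print(f"60 FPS budget: {frame_budget_ms(60):.1f} ms per frame")
print(f"90 FPS budget: {frame_budget_ms(90):.1f} ms per frame")
print(f"RMSE after simplification: {rmse(profile, simplified):.3f} m")
```

Moving from 60 to 90 FPS shrinks the per-frame budget from about 16.7 ms to about 11.1 ms, which is why the face count matters. In practice the simplification level would be raised step by step until the RMSE reaches the tolerance that the application can accept.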

Geometrically modelling man-made structures such as buildings and curbs was time-consuming. While some automatic shape detection algorithms exist, they detect only primitive shapes such as boxes, spheres and cylinders. These are sufficient for applications that do not require a high level of modelling detail, but not for immersive virtual environments. Consider that a building has complex window and door structures, arched walkways and handrails, and that its surroundings include parking lot stripes and letter markings on asphalt (e.g. ‘STOP’). In our case, we modelled man-made objects by hand from the point cloud, which was laborious. Figure 2 (right) shows an example of these 3D models in 3ds Max. We compared our modelled 3D structures against 93 checkpoints on building corners and parking lot stripes, which showed agreement within 10-20cm. Improved shape detection algorithms will in future further automate the extraction of structures from point clouds and make the process easier. For trees, we followed a different approach: we used a random tree generator (from Unity’s Asset Store) and placed the trees at the corresponding locations identified from the merged point cloud.
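A checkpoint comparison of this kind can be sketched in a few lines of Python. The example below is a hypothetical illustration, not the procedure used in this project: it assumes each checkpoint is available as a pair of (x, y, z) coordinates in metres, one taken from the 3D model and one surveyed on site, and it reports horizontal and vertical offsets separately. The coordinates are made up.

```python
import math


def checkpoint_offsets(modelled, surveyed):
    """Return a (horizontal, vertical) offset in metres for each checkpoint pair."""
    offsets = []
    for (xm, ym, zm), (xs, ys, zs) in zip(modelled, surveyed):
        horizontal = math.hypot(xm - xs, ym - ys)
        vertical = abs(zm - zs)
        offsets.append((horizontal, vertical))
    return offsets


def share_within(offsets, tolerance):
    """Fraction of checkpoints whose horizontal and vertical offsets are both within tolerance."""
    within = sum(1 for h, v in offsets if h <= tolerance and v <= tolerance)
    return within / len(offsets)


# Made-up coordinates for three checkpoints (e.g. building corners).
modelled = [(10.00, 20.00, 5.00), (15.12, 20.00, 5.05), (10.00, 30.30, 5.00)]
surveyed = [(10.00, 20.00, 5.00), (15.00, 20.00, 5.00), (10.00, 30.00, 5.00)]

offsets = checkpoint_offsets(modelled, surveyed)
for number, (h, v) in enumerate(offsets, start=1):
    print(f"checkpoint {number}: horizontal {h:.2f} m, vertical {v:.2f} m")
print(f"share within 0.20 m: {share_within(offsets, 0.20):.2f}")
```

Separating horizontal from vertical offsets helps to show whether a disagreement comes from the placement of an object or from its height above the terrain.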

Textures

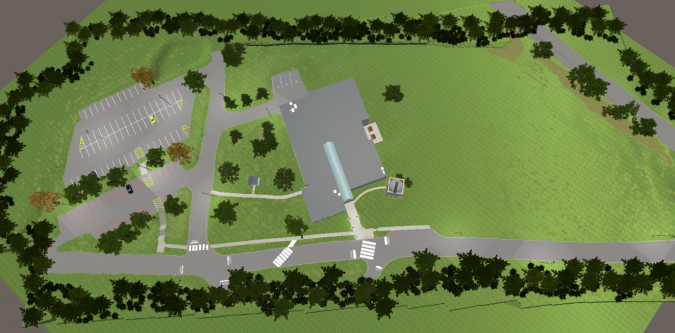

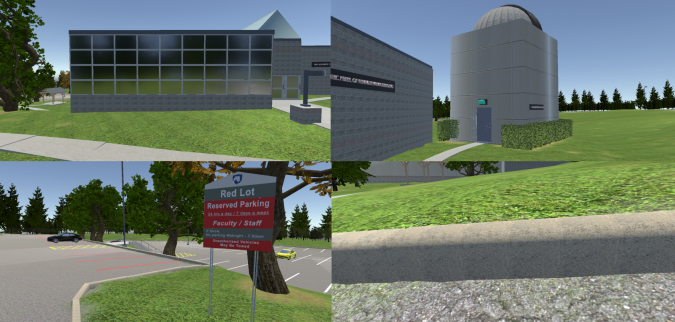

Textures are an essential component of virtual environments. Creating and applying textures that look like their physical counterparts creates a sense of realism. While point clouds provide important geometric information, the pictures taken by the UAS and the TLS do not have sufficient resolution to create textures. As users can come very close to 3D objects in virtual reality, they would be able to see the individual pixels of the aerial imagery. Built-in materials in game development software offer an easy solution if they are similar enough to their physical counterparts. This is the case for asphalt and grass textures. However, some physical objects have unique and complicated patterns, so their textures need to be developed from scratch. For example, exterior building walls often have unique patterns. To create such textures, we used close-up photography and created patterns in Photoshop that resemble the physical ones. It is important that these textures are accompanied by normal maps, which give an illusion of depth in virtual reality. From these textures, we created materials that were then applied to the 3D objects. Figure 3 shows a top view of the virtual environment we created, while Figure 4 shows close-up examples of our textures. Of note are the custom textures on the buildings and in the surrounding environment. For instance, in Figure 4 (top left) the windows were made reflective, and in Figure 4 (bottom left) the parking lot sign was faithfully recreated. In addition, we added ambient sounds such as birdsong and the sound of tree branches and leaves rustling in the wind. These sounds add to the sense of realism, which is important for creating a sense of immersion.
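To show what a normal map encodes, the following Python sketch derives one from a small grid of heights. It is a generic illustration of the technique and not the Photoshop-based workflow described above. It assumes a grayscale height map given as a list of rows, estimates slopes from the differences between neighbouring texels, and stores each surface normal as an RGB colour. The function name and the `strength` parameter are hypothetical, and the sign convention of the green channel differs between tools, so it may need flipping for a particular engine.

```python
import math


def normal_map(heights, strength=1.0):
    """Convert a 2D height grid into RGB-encoded tangent-space normals.

    Slopes are estimated from the difference between the neighbours on either
    side of each texel (clamped at the borders) and scaled by `strength`.
    """
    rows, cols = len(heights), len(heights[0])
    result = []
    for r in range(rows):
        row = []
        for c in range(cols):
            left = heights[r][max(c - 1, 0)]
            right = heights[r][min(c + 1, cols - 1)]
            up = heights[max(r - 1, 0)][c]
            down = heights[min(r + 1, rows - 1)][c]
            dx = (right - left) * strength
            dy = (down - up) * strength
            length = math.sqrt(dx * dx + dy * dy + 1.0)
            normal = (-dx / length, -dy / length, 1.0 / length)
            # Map each component from [-1, 1] to an 8-bit colour channel.
            row.append(tuple(round((n * 0.5 + 0.5) * 255) for n in normal))
        result.append(row)
    return result


# A flat surface gives the uniform light blue typical of normal maps.
flat = [[0.0] * 3 for _ in range(3)]
print(normal_map(flat)[1][1])  # (128, 128, 255)

# A surface rising from left to right tilts the normal, lowering the red channel.
ramp = [[0.0, 1.0, 2.0] for _ in range(3)]
print(normal_map(ramp)[1][1])
```

Because the colours store only the direction of the surface, a flat wall polygon textured this way reacts to light as if its bricks or mortar joints had depth, without adding any faces to the mesh.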

Research results

This article presents a workflow for using point cloud technologies to create realistic virtual environments. We plan to use such environments for immersive and interactive surveying labs in virtual reality. Merging point clouds generated by different acquisition methods yields complete and highly detailed geometric information. 3D modelling and texturing are important steps in making the environment realistic, but they take time and effort. Virtual reality technology is advancing rapidly and quickly finding its way into surveying and engineering, increasing the demand for replicating physical environments as virtual ones and opening the door to new professional endeavours.

Acknowledgements

This project has been supported via grants from the following sources: Schreyer Institute for Teaching Excellence of the Pennsylvania State University; the Engineering Technology and Commonwealth Engineering of the Pennsylvania State University; Chancellor Endowments from Penn State Wilkes-Barre. Students John Chapman, Vincent F. Pavill IV, Eric Williams, Joe Fioti, Donovan Gaffney, Malcolm Sciandra, Courtney Snow and adjunct Brandi Brace are acknowledged for their involvement in this research.

