Recent trends in four Lidar technologies

Providing a complete Lidar-based point cloud solution

Lidar technology is synonymous with 3D point clouds. To get the most out of 3D point cloud data, it is necessary to step back and continually consider the newest trends in Lidar technology and how they are benefiting end-users of the data. This article explores the latest developments.

Within the spatial industry there are four popular platforms for producing point clouds: 1) Airborne Lidar, which captures data from above using aircraft and unmanned aerial vehicles (UAVs or ‘drones’), 2) Mobile Lidar, mounted on moving platforms such as road vehicles, trains and boats, 3) Terrestrial Lidar, captured from a static platform, typically a tripod, and 4) Handheld, short-range Lidar using SLAM technology.

Each platform plays an important role in generating Lidar-captured point clouds and each one offers a unique perspective on the world – from airborne which can be captured at scale across cities to handheld SLAM for rapidly capturing detail within indoor spaces.

Airborne Lidar

In recent times the biggest gains for airborne Lidar have been in the processing and delivery of 3D point clouds. There have also been developments in lightweight sensors for drones, along with drone autonomy. However, it is the automation of cloud-based processing and storage that has attracted significant investment, resulting in substantial reductions in delivery times.

Maximum point densities have generally stabilized across the market, with improved customer knowledge having a greater influence on capture. The old approach of obtaining as much detail as possible is being reconsidered in favour of value: capturing only as much detail as is needed. Through social media, customers are becoming more aware of what is possible and, as a result, are better informed about the specifics of Lidar capture.

Data processing

As mentioned above, the biggest recent change for airborne Lidar has been in data processing. This is now more automated and streamlined, typically using a fully cloud-based architecture in which manual editing and quality assurance (QA) are also carried out in the cloud. This cloud-based approach has resulted in significantly faster turnaround times and more versatility between the field and office. Project data can now be uploaded soon after the aircraft lands back on the tarmac, and in some instances the post-processed data is also delivered in the cloud. As with all cloud-based solutions, the current challenge for most projects is bandwidth, because adequate speeds are required to realize the benefits.

In Australia, there has been a general transition away from large, environmentally driven captures towards forestry and urban applications with annual refreshes. These captures are driving major programmes supporting bushfire management and the development of urban digital twins. Airborne Lidar has been fundamental in supporting the creation of high-quality digital elevation models (DEMs) to underpin the digital twin landscape and to derive high-quality, up-to-date building models. These applications have meant that data currency is becoming one of the most important factors for Lidar capture.

Forestry-based Lidar is increasing commensurately with concerns around bushfire risk. The calculation of fuel loads for bushfire predictions is in great demand within Australia. Applications such as coastal erosion and sea-level rise have been reduced from large-scale programmes to more localized surveys over hotspots.

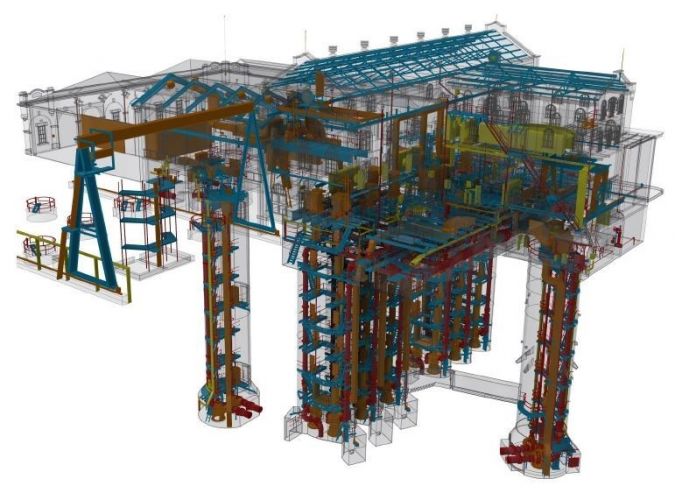

Digital twins

The focus on urban areas and digital twins, along with value for money, has meant a reduction in demand for full-waveform Lidar. The larger volumes of data and constant need to evolve data management have accelerated the transition to cloud-based storage and streaming as well as increasingly efficient data compression formats. The costs for processing and storing large volumes in the cloud can add up, and companies are getting smarter about obtaining only what they need.

In recent times, there has been a stronger push in communications between customers and suppliers. Customers are demanding more information about the data processing pipeline, and companies are providing more details about their approach to processing – whether it is automated, manual or outsourced – including their approach to quality assurance and editing.

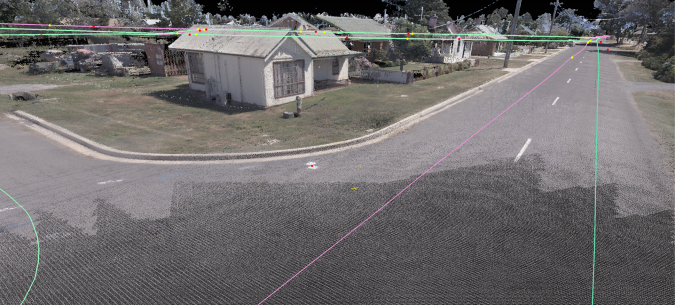

Mobile Lidar

Mobile Lidar has come a long way in the past few years. Mobile Lidar capture no longer needs a multitude of cables and accessories to be carefully pieced together by an expert. All the mapping-grade sensors such as those from Velodyne and Ouster and the survey-grade platforms from RIEGL, Trimble and Leica are now much easier to mobilize: virtually plug and play. Each sensor offers its own benefit, from a wide vertical field of view or longer ranges to multiple pulse returns or higher accuracies.

In mobile Lidar there has been an increase in point density, particularly when using dual scan heads to capture a more comprehensive view from a single pass. Systems such as the RIEGL VMX-2HA, Trimble MX9, Teledyne HS600 and the Leica Pegasus Two:Ultimate all have the ability to capture more than two million points per second with ranges from 100m to 500m-plus in order to achieve great detail.

The benefit of using two scanners simultaneously in a dual-head system is that more detail can now be captured from fewer passes of the road or within a rail corridor. This also means that data occlusion is reduced, and the object definition is improved as multiple angles of scan data are captured.

Challenging point densities

The point densities being generated from mobile Lidar are constantly challenging IT infrastructures. For instance, an hour of data capture can generate around 200GB of raw data even when point cloud and image compression are applied. A typical project can produce 100GB in an hour from eight 12MP cameras recording images at a 3m spacing and two Lidar sensors operating simultaneously at one million points per second each. The sheer size of the data produced becomes a game of transfer speeds and GPUs to achieve efficient point cloud and imagery processing.
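The scale of these figures can be explored with a back-of-envelope calculation. The sketch below is illustrative only: the sensor count, point rate, camera count and image spacing come from the example above, while the vehicle speed and the per-point and per-image storage sizes are placeholder assumptions, not vendor or project figures.

```python
SECONDS_PER_HOUR = 3600


def lidar_points_per_hour(sensors: int, points_per_second: float) -> float:
    """Total points recorded in one hour by all sensors running simultaneously."""
    return sensors * points_per_second * SECONDS_PER_HOUR


def images_per_hour(cameras: int, speed_kmh: float, spacing_m: float) -> float:
    """Images per hour when every camera fires once per `spacing_m` travelled."""
    distance_m = speed_kmh * 1000.0  # metres covered in one hour
    return cameras * distance_m / spacing_m


def hourly_volume_gb(points: float, bytes_per_point: float,
                     images: float, bytes_per_image: float) -> float:
    """Combined point cloud and imagery volume in gigabytes (1 GB = 1e9 bytes)."""
    return (points * bytes_per_point + images * bytes_per_image) / 1e9


if __name__ == "__main__":
    # From the text: two sensors at one million points per second each.
    points = lidar_points_per_hour(sensors=2, points_per_second=1_000_000)
    # From the text: eight cameras at 3m spacing. The speed is an assumption.
    images = images_per_hour(cameras=8, speed_kmh=36.0, spacing_m=3.0)
    # Storage sizes are placeholders; real values depend on format and compression.
    volume = hourly_volume_gb(points, bytes_per_point=10.0,
                              images=images, bytes_per_image=1_000_000.0)
    print(f"{points:,.0f} points, {images:,.0f} images, about {volume:,.0f} GB")
```

Two sensors at one million points per second each already amount to 7.2 billion points per hour, so the total is highly sensitive to the storage size assumed per point and per image.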

Another challenge for mobile Lidar is the requirement for a high degree of survey control to be safely placed within the road corridor. Survey control usually involves strategically placed targets on the pavement and vertical surfaces, or alternatively utilizing terrestrial Lidar data to verify the accuracy of the mobile Lidar point cloud. With the faster turnaround times and the requirement to maintain safety standards, survey control is a constant challenge.

One of the latest advancements in mobile Lidar has been the integration of accurately aligned high-resolution imagery to the point cloud. The latest systems have an array of cameras with a combination of both spherical and directional cameras. Some systems such as the RIEGL VMX-2HA and Leica Pegasus Two:Ultimate use directional cameras of up to 12MP and can capture frames at speeds of up to 16FPS. This highly detailed and aligned imagery enables high-quality RGB colourized point clouds for improved visualization and analysis.

Less manual alignment

The processing of mobile Lidar point clouds has been streamlined in recent years with improved trajectory processing utilizing advances in Kalman filtering and image-based cloud-to-cloud registration. This has meant that much of the manual point cloud alignment and registration to ground control has been reduced, speeding up the overall processing to achieve less than 10mm separation between point cloud passes. Auto-alignment algorithms result in a more accurate product with far less human interaction. The improvement in data processing means that clients are able to start working on accurate data much sooner.
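A pass-to-pass separation target such as the 10mm figure above lends itself to a simple check. The following is a minimal sketch of such a quality check, not the alignment algorithm itself. It assumes that the same features have already been matched between two passes and supplied as paired coordinates in metres; the function names and the way correspondences are obtained are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def pass_separations_mm(pass_a: Sequence[Point], pass_b: Sequence[Point]) -> List[float]:
    """3D distance in millimetres between matched points from two passes.

    Assumes pass_a[i] and pass_b[i] are the same physical feature (in metres).
    """
    if len(pass_a) != len(pass_b):
        raise ValueError("both passes must contain the same number of matched points")
    return [math.dist(a, b) * 1000.0 for a, b in zip(pass_a, pass_b)]


def within_tolerance(separations_mm: Sequence[float], tolerance_mm: float = 10.0) -> bool:
    """True when every matched pair is separated by less than the tolerance."""
    return all(s < tolerance_mm for s in separations_mm)


if __name__ == "__main__":
    first_pass = [(0.0, 0.0, 0.0), (1.0, 1.0, 1.0)]
    second_pass = [(0.0, 0.0, 0.004), (1.0, 1.003, 1.004)]
    separations = pass_separations_mm(first_pass, second_pass)
    print([round(s, 1) for s in separations], within_tolerance(separations))
```

In practice the matched points would typically come from survey control targets or well-defined features visible in both passes.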

Machine-learning feature extraction is playing a significant role in extracting value from both airborne and mobile Lidar data. Whilst machine learning is taking off, there is still a need for more labelled training datasets, suited to each application, which data scientists can use to train and optimize algorithms. Labelled training datasets identifying objects within point clouds are therefore becoming increasingly valuable. In point clouds, these objects are located by 3D bounding boxes drawn around them and labelled with the object type. These training datasets provide the foundation for training machine-learning models to extract more value from point cloud data.
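As a rough illustration of what such a label can look like, the sketch below represents a labelled object as an axis-aligned 3D box and assigns each point the label of the first box that contains it. The class and function names are hypothetical, and the axis-aligned box is a simplifying assumption; labelled datasets often use oriented boxes with a heading angle.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


@dataclass(frozen=True)
class LabelledBox:
    """Axis-aligned 3D bounding box with an object-type label (hypothetical format)."""
    label: str
    min_corner: Point
    max_corner: Point

    def contains(self, point: Point) -> bool:
        """True when the point lies inside or on the boundary of the box."""
        return all(lo <= v <= hi
                   for lo, v, hi in zip(self.min_corner, point, self.max_corner))


def label_points(points: Sequence[Point], boxes: Sequence[LabelledBox],
                 background: str = "unlabelled") -> List[str]:
    """Give each point the label of the first box containing it, else `background`."""
    return [next((b.label for b in boxes if b.contains(p)), background)
            for p in points]


if __name__ == "__main__":
    boxes = [LabelledBox("pole", (0.0, 0.0, 0.0), (1.0, 1.0, 5.0))]
    cloud = [(0.5, 0.5, 2.0), (3.0, 3.0, 1.0)]
    print(label_points(cloud, boxes))
```

Per-point labels derived this way are one common input for training point cloud classifiers, alongside the boxes themselves for object detection.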

Terrestrial Lidar

Around 15 years ago, with all the hardware and software working properly, it was possible to achieve three scan setups per day using a Leica HDS3000. The scanner weighed 16kg, batteries were the size of briefcases and everything was controlled using a laptop computer – but it made processing cloud-to-cloud alignments easy.

In terrestrial Lidar, the hardware has progressed in leaps and bounds and the latest generation of scanners are able to achieve over 200 scan setups per day. Now, the challenge has become how to process all the data more efficiently.

With the increase in scan setups that can be achieved in a single field day, automated point cloud alignment algorithms provided a solution several years ago, in particular Trimble Realworks’ very effective ‘Auto-register using planes’. The drawbacks have been the computing time required to run the process and the need for rigorous quality checks to ensure the algorithm has got it right. Scanning data through glass, long featureless corridors and large moving objects such as sails or trees can all steer the alignment well off course.

On-the-fly alignment

Perhaps surprisingly in an industry where ‘automation’ is a byword for the future, the latest developments in terrestrial point cloud processing have been non-automated. On-the-fly cloud-to-cloud alignments using a Wi-Fi-connected tablet have virtually eliminated the need for the slow, tedious task of point cloud processing in registration software. Instead, the software now has the ability to align the point clouds in the field as data is acquired, overcoming the drawbacks of automated registration and allowing the operator to visually check the alignment prior to accepting.

Leica’s most recent terrestrial Lidar system, the RTC360, is a great example of on-the-fly alignments. The system can be operated either on the unit’s GUI or via a Wi-Fi-linked tablet device through the Leica Field 360 application. However, it should be noted that the point cloud alignment can only be done using the tablet. The point cloud acquisition only takes around 30 seconds (12mm point spacing at 10m), with the addition of colour HDR imagery taking an extra minute.
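A resolution quoted as a point spacing at a reference range, such as 12mm at 10m, corresponds to a fixed angular step between measurements, so the spacing grows in proportion to distance. The sketch below shows that relationship under two stated assumptions: the small-angle approximation and a surface perpendicular to the beam. The function names are illustrative and are not part of any Leica software.

```python
def angular_step_rad(spacing_m: float, range_m: float) -> float:
    """Angular step between neighbouring measurements (small-angle approximation)."""
    return spacing_m / range_m


def spacing_at_range_m(step_rad: float, range_m: float) -> float:
    """Point spacing on a surface facing the scanner at the given range."""
    return step_rad * range_m


if __name__ == "__main__":
    # From the text: 12mm point spacing at 10m.
    step = angular_step_rad(spacing_m=0.012, range_m=10.0)
    for distance in (5.0, 10.0, 20.0, 40.0):
        spacing_mm = spacing_at_range_m(step, distance) * 1000.0
        print(f"{distance:>5.1f} m -> {spacing_mm:.1f} mm")
```

Doubling the range doubles the spacing, which is one reason setups are placed closer together where fine detail matters.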

While the tablet-based alignments eat into the scan acquisition time, the improvements in point cloud and HDR imagery times easily offset this delay. Unfortunately, at this stage the operator is unable to utilize the downtime during acquisition to complete past alignments. However, it is easy to envisage this being addressed in future firmware updates.

Additional benefits to the align-as-you-go workflow include:

- The operator can show the client the aligned data in real time on the tablet device, creating greater appreciation and engagement with the work being conducted.

- A virtual floorplan showing the scan locations and voids in the field avoids potential re-work.

- The additional processing in the field removes the need for detailed fieldnotes showing locations of scans.

- The user receives a prompt if the application is unable to align the adjacent scans due to insufficient overlap between setups.

Although this may make the office-based cloud-to-cloud alignments a thing of the past, there is still a need to have robust survey control and rigorous data quality-assurance processes in place. Data integrity always requires the support from traditional survey techniques such as survey control and reference targets.

Handheld, short-range Lidar using SLAM

The fourth and final commonly used commercial platform for Lidar is the handheld/backpack short-range Lidar using simultaneous localization and mapping (SLAM) technology. SLAM enables the Lidar system to position itself within its environment – typically GNSS-denied locations. As SLAM algorithms have improved over the past couple of years, handheld Lidar has taken off as a commercial solution.

Prior to commercially available handheld devices, terrestrial Lidar was the only commonly applied solution for indoor Lidar. Leica’s BLK2GO and Emesent’s Hovermap have revolutionized the acquisition of Lidar in confined, complex indoor environments. The advantages of these solutions are their speed, versatility and ease of use. Where applications can tolerate lower accuracies, these solutions offer significant advantages in the speed of capture, processing and delivery.

In combination with drone autonomy

The Emesent Hovermap also has the versatility of being able to be attached to a drone. In this mode, combining the SLAM technology with drone autonomy provides a powerful indoor mapping solution for mining and complex enclosed sites. In fact, Hovermap’s autonomy level 2 uses collision avoidance to enable the drone to fly beyond the line of sight and the Lidar data is streamed back to the operator in real time.

In the next few years, significantly more handheld SLAM-based Lidar systems can be expected to enter the market.

Conclusion

In recent years, there has been a strong industry focus on increasing the speed of delivering Lidar data to the customer. Factors such as the uploading of data from the field to cloud, auto-alignment of scans, product automation and delivery through the browser have meant that end users are receiving their data faster. With the push towards digital twins, there is now more interest in complete coverage of buildings and assets utilizing a range of integrated Lidar technologies. Recent developments improving the versatility of Lidar across its many platforms have made it easier than ever to provide a complete Lidar-based point cloud solution.

Acknowledgements

The authors would like to thank Rob Clout (Aerometrex) as well as Rick Frisina and Jarrod Hatfield (Victorian Department of Environment, Land, Water and Planning) for their valuable insights.
