What Are the Main Reasons for Choosing UAV-based Lidar Mapping?

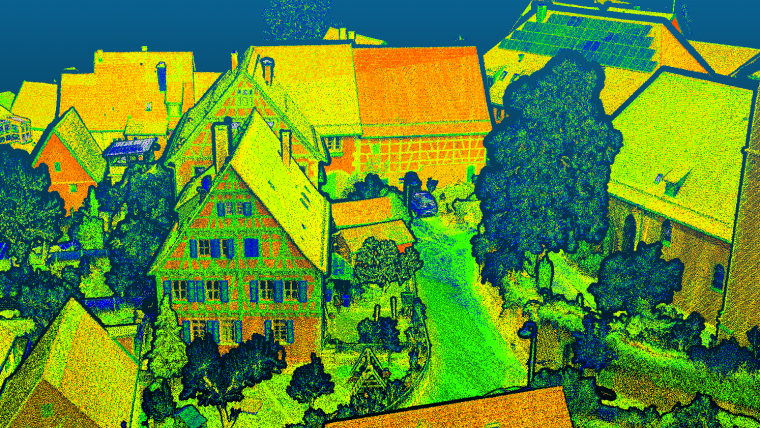

Lidar mapping based on unmanned aerial vehicles (UAVs or ‘drones’) can generally be thought of as a close-range version of manned airborne laser scanning. In brief, lower mobilization costs and much higher spatial resolution are enabling new Lidar mapping use cases in disciplines such as forestry, bathymetry, archaeology, infrastructure mapping and hazard management.

In general, modern UAV-based Lidar solutions fall into one of the following categories:

- Survey-grade sensors featuring narrow laser beams with a beam divergence of <1 mrad, high pulse repetition rates in the MHz range and sophisticated full waveform-based signal processing. These high-end sensors weigh around 1 to 4 kg, are typically integrated on multi-copter UAV platforms and are employed whenever both the utmost spatial resolution of more than 500 points/m² and measurement precision in the mm range are required.

- Ultralight sensors weighing <1 kg and typically integrated on either multi-copter or fixed-wing UAV platforms. The lower weight allows longer flight endurance and consequently greater areal coverage per flight. Such sensors are the first choice if (i) decimetre accuracy is sufficient, and (ii) beyond visual line of sight (BVLOS) operation is legally permitted.

- Flash Lidar time-of-flight (ToF) cameras. These are the cheapest and most lightweight, but also the least accurate systems on the market. Flash Lidar cameras are mainly used for applications like collision avoidance, object tracking and augmented reality rather than for traditional mapping tasks.
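To make the survey-grade figures above more tangible, the following minimal sketch estimates the laser footprint and the mean point density of a single flight strip. It relies on two standard approximations: the footprint diameter grows as range times beam divergence (small-angle approximation), and the mean density equals the pulse rate divided by the area swept per second over flat terrain. The flying height, speed and field of view are illustrative assumptions and are not taken from any specific sensor; the function names are hypothetical.

```python
import math


def footprint_diameter_m(range_m, divergence_mrad, exit_aperture_m=0.0):
    """Approximate footprint diameter at a given range (small-angle approximation)."""
    return exit_aperture_m + range_m * divergence_mrad * 1e-3


def mean_point_density(pulse_rate_hz, speed_m_s, altitude_m, fov_deg):
    """Mean single-strip density in points/m^2.

    Assumes flat terrain, one echo per pulse and an even distribution
    of pulses across the full scan field of view.
    """
    swath_m = 2.0 * altitude_m * math.tan(math.radians(fov_deg) / 2.0)
    return pulse_rate_hz / (speed_m_s * swath_m)


# Assumed mission parameters (illustrative only)
footprint = footprint_diameter_m(range_m=50.0, divergence_mrad=1.0)
density = mean_point_density(
    pulse_rate_hz=1_000_000,  # 1 MHz pulse repetition rate
    speed_m_s=8.0,            # assumed ground speed
    altitude_m=50.0,          # assumed flying height above ground
    fov_deg=90.0,             # assumed scan field of view
)

print(f"footprint: {footprint * 100:.1f} cm")
print(f"density:   {density:.0f} points/m^2")
```

Under these assumptions the footprint stays at the centimetre level and the density is well above 500 points/m². Real values depend on the scan pattern, strip overlap and the effective measurement rate of the sensor.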

Compared to UAV photogrammetry using passive RGB camera sensors, survey-grade UAV-Lidar features multi-target capability (i.e. multiple echoes per laser shot), which allows the laser to penetrate semi-transparent objects like vegetation. It also delivers precise geometry as well as radiometry (signal strength). As an active measurement technique, it requires no surface texture, and even night-time operation is possible given the necessary flight permission. However, unlike image-based UAV mapping, UAV-Lidar relies on direct georeferencing and therefore requires appropriate GNSS/IMU hardware. As opposed to dense image matching, each point of the resulting high-resolution 3D point cloud is individually measured, so follow-up products such as digital elevation models (DEMs) are more reliable. State-of-the-art systems carry passive cameras and active laser scanners on the same UAV platform, achieving the best of both worlds via data fusion.
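The multi-target capability described above can be illustrated with a small sketch. It assumes that every echo carries its return number and the total number of returns of its laser shot, as is common in point cloud formats such as LAS; the sample values are made up for illustration. Last echoes are only candidates for terrain points, since a last echo may still come from dense vegetation or a roof, so a proper ground filter is needed before deriving a DEM.

```python
# Hypothetical echoes: (return_number, number_of_returns, height_m)
echoes = [
    (1, 1, 201.2),  # single echo, e.g. open ground
    (1, 3, 215.8),  # first of three echoes, e.g. canopy top
    (2, 3, 209.1),  # intermediate echo, e.g. a branch
    (3, 3, 201.0),  # last of three echoes, possibly ground
    (1, 2, 212.4),  # first of two echoes
    (2, 2, 201.3),  # last of two echoes
]

# Last echoes: the final return of each laser shot (includes single echoes)
last_echoes = [e for e in echoes if e[0] == e[1]]

# First echoes of shots that produced more than one return
first_of_many = [e for e in echoes if e[0] == 1 and e[1] > 1]

last_heights = [height for _, _, height in last_echoes]
print(last_heights)
```

In this made-up sample the last echoes cluster near 201 m while the first echoes of multi-return shots lie several metres higher, which is the pattern one would expect under a vegetation canopy.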

Gottfried Mandlburger is a senior researcher in the photogrammetry research group within the Department of Geodesy and Geoinformation at TU Wien.
