Effective Use of Geospatial Big Data

Server Solutions Hold the Key

This article was originally published in Geomatics World.

To deal with today's unprecedented quantities of big data, mission-critical geospatial analysis systems require purpose-designed software that has been tested in the most demanding environments.

The server is increasingly the heart of any geospatial analysis system, regardless of its location or configuration. Whether the system runs in the ‘cloud’, in a secure data centre or on a single office machine, it faces the same challenge: handling the ever-increasing volume and variety of data that the world now produces at an unprecedented rate. This article explains why mission-critical systems require purpose-designed software that has been tested in the most demanding environments.

Both commercial and government organisations recognise the enormous fiscal, operational and social benefits of using their geospatial data for analysis. However, because the volume, variety and velocity of that data are continually growing, this creates mounting problems for those responsible for storing, analysing and serving the information within an organisation. Companies are also facing challenges from the many new sensor platforms now emerging, many of which did not exist a few years ago and all of which must be incorporated into future GIS applications.

‘Maps Gone Digital’ Mindset Holds Organisations Back

When an organisation is considering a new system, it is therefore vital that the system can cope with the ever-rising amount and variety of data, whether that data comes from georeferenced social media posts, high-resolution satellite imagery or smart energy meters. Legacy technology based on the ‘maps gone digital’ mindset cannot deliver the necessary visual quality, speed and accuracy, nor can it run on platforms as diverse as Windows, Linux, Amazon AWS and Docker containers, or be deployed from a USB stick if needed.

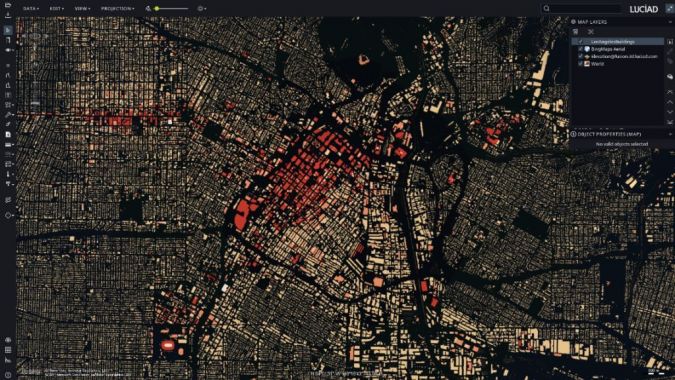

Organisations today need industry-standard system foundations for truly interactive solutions that can analyse and visualise video, photographs, unstructured text and many forms of legacy data in real time and in a secure environment. Equally, systems should ideally be based on proven technology that has been extensively tested in the mission-critical defence and aerospace sectors, which are the most extreme and demanding operational environments for any software. In essence, delivering uncompromised performance and accuracy when analysing geospatial Big Data and the location information that flows from the Internet of Things requires purpose-designed software. Try to do it more cheaply and you only end up wasting money.

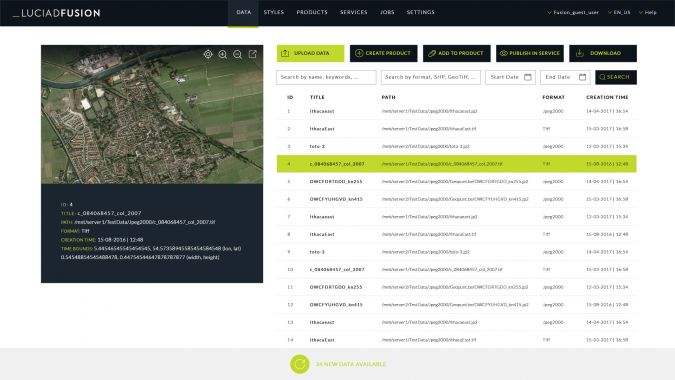

Among those who have only experienced legacy systems, the most sought-after option is a single, unified, secure and future-proof server solution for data publication workflows and geospatial data management. Such systems enable users to manage their data intelligently, store and process a multitude of data formats, and feed data into numerous applications with varying levels of security. Features such as powerful automatic cataloguing and quick, easy data publishing are also in demand, because they allow users to design, portray, process and set up advanced 3D maps in a few simple clicks.

These are requirements and demands that we have seen at Luciad over the past few years, both through our work with some of the most demanding Big Data users such as NATO and EUROCONTROL and through our work with commercial organisations such as Oracle and Engie Ineo. So, what does a geospatial server solution capable of dealing with these challenges and satisfying these demands actually require?

Spatial Server Solution Requirements

First, such solutions should be able to connect directly to a multitude of geographic data sources and formats, such as IBM DB2, OGC GeoPackage, Oracle Spatial, SAP HANA and Microsoft SQL Server. This is essential if they are to cope with the explosion of formats that the rise of Big Data has prompted. It is equally essential that these server systems move away from the Extract, Transform, Load (ETL) paradigm and avoid converting the data into a fixed, high-cost proprietary format before analysis. Retaining the data in its original format is recognised as the only method that ensures both high processing speed and accuracy when dealing with the growing, dynamic datasets that are now the norm.
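
As a small illustration of reading data in its original format, note that an OGC GeoPackage is an SQLite database, so its layer catalogue can be inspected in place, with no ETL step, using only Python's built-in `sqlite3` module. The sketch below queries the standard `gpkg_contents` table; the helper name `list_geopackage_layers` is hypothetical, and this is not how Luciad's server connects to GeoPackage, only a minimal demonstration of the principle.

```python
import sqlite3


def list_geopackage_layers(conn: sqlite3.Connection) -> list[tuple[str, str]]:
    """Return (table_name, data_type) for every layer registered in a GeoPackage.

    Hypothetical helper: it reads the OGC-defined gpkg_contents table directly,
    so the data stays in its original format and nothing is converted or copied.
    """
    rows = conn.execute(
        "SELECT table_name, data_type FROM gpkg_contents ORDER BY table_name"
    )
    # Each row is a (table_name, data_type) tuple, e.g. ("roads", "features")
    return [(name, kind) for name, kind in rows]
```

Usage would be along the lines of `list_geopackage_layers(sqlite3.connect("example.gpkg"))`, where `example.gpkg` is a placeholder path; `data_type` distinguishes vector `features`, raster `tiles` and plain `attributes` tables.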

Second, when a user has a large quantity of high-volume, high-quality geospatial data that needs to be published to an OGC standard, it must be possible to do so in a few clicks. This avoids complex, risky and time-consuming pre-processing of the data or the writing of custom software code. The same ease of use is required for other common formats such as ECDIS maritime data, Shape, KML and GeoTIFF, among many others. It is vital that this data can be accessed and represented in any coordinate reference system (geodetic, geocentric, topocentric, grid) and in any projection while performing advanced geodetic calculations, transformations and ortho-rectification. This is especially crucial for datasets such as weather and satellite information, which include detailed temporal references, and for high-resolution video files that need to be visualised in 3D together with ground elevation data and moving objects.
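
To make the coordinate reference system terminology concrete, here is a minimal sketch of two standard conversions: geodetic coordinates (latitude, longitude and height on the WGS84 ellipsoid) to geocentric Earth-centred, Earth-fixed (ECEF) coordinates, and geocentric coordinates to a local topocentric East-North-Up frame. It assumes WGS84 and ellipsoidal heights throughout; the function names are hypothetical, and a production server would rely on a tested geodesy library rather than hand-written formulas like these.

```python
import math

# WGS84 ellipsoid parameters (standard published values)
WGS84_A = 6378137.0                  # semi-major axis, metres
WGS84_F = 1 / 298.257223563          # flattening
WGS84_E2 = WGS84_F * (2 - WGS84_F)   # first eccentricity squared


def geodetic_to_geocentric(lat_deg: float, lon_deg: float, h_m: float) -> tuple[float, float, float]:
    """Convert WGS84 latitude/longitude (degrees) and ellipsoidal height (m) to ECEF X, Y, Z (m)."""
    lat = math.radians(lat_deg)
    lon = math.radians(lon_deg)
    sin_lat = math.sin(lat)
    # Prime vertical radius of curvature at this latitude
    n = WGS84_A / math.sqrt(1 - WGS84_E2 * sin_lat ** 2)
    x = (n + h_m) * math.cos(lat) * math.cos(lon)
    y = (n + h_m) * math.cos(lat) * math.sin(lon)
    z = (n * (1 - WGS84_E2) + h_m) * sin_lat
    return x, y, z


def geocentric_to_topocentric(x: float, y: float, z: float,
                              lat0_deg: float, lon0_deg: float, h0_m: float) -> tuple[float, float, float]:
    """Express an ECEF point as East/North/Up offsets (m) from a geodetic reference point."""
    x0, y0, z0 = geodetic_to_geocentric(lat0_deg, lon0_deg, h0_m)
    dx, dy, dz = x - x0, y - y0, z - z0
    lat0 = math.radians(lat0_deg)
    lon0 = math.radians(lon0_deg)
    # Rotate the ECEF offset vector into the local East-North-Up frame
    east = -math.sin(lon0) * dx + math.cos(lon0) * dy
    north = (-math.sin(lat0) * math.cos(lon0) * dx
             - math.sin(lat0) * math.sin(lon0) * dy
             + math.cos(lat0) * dz)
    up = (math.cos(lat0) * math.cos(lon0) * dx
          + math.cos(lat0) * math.sin(lon0) * dy
          + math.sin(lat0) * dz)
    return east, north, up
```

For example, `geodetic_to_geocentric(0.0, 0.0, 0.0)` returns `(6378137.0, 0.0, 0.0)`: a point on the equator at the prime meridian lies one semi-major axis from the Earth's centre along the X axis.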

Help

Users' demands, however, go beyond the server solution itself. At the heart of any decision about a major GIS, IT or technology purchase should be an understanding of the support, training and help that will be required, plus a firm written commitment from the supplier regarding backwards compatibility. The user should also be aware of and involved in the development roadmap, which should be driven by the needs and wishes of both end users and the developer community.

This is a recognised and concerning weakness of Open Source software, most of which offers little or no help and training. What training exists may well come from individuals with no relevant qualifications or skills, who are often all located in a single time zone, which delays responses. Where lives are at stake, as in mission-critical environments, Open Source is a risk. It is vital that advanced in-person and online training is available from people who have an intimate working knowledge of the software and a relationship with the original development team, backed up by detailed manuals and code examples. Training and support should come only from subject matter experts who understand the time-critical commercial challenges that businesses face. They should also be aware of the planned development pathways for the software and of the possibilities for custom code for one-off projects with unique requirements.

Technology Advances

Giving users and developers the tools to extend their solution is also essential. As mentioned above, new data formats may be introduced and requirements may change as technology advances. This calls for a user guide that delivers clear explanations and descriptions of best practices, along with API references that describe all interfaces and classes in detail, so that a new user can seamlessly add new data formats and sources as a project requires. For example, developing a Common Operating Picture requires combining imagery, military symbology, NVG files, radar feeds and constantly changing types of other data in one system, in near real time and with minimal delay.
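
Extension points of this kind, where new formats are added by implementing documented interfaces, are often built as a plug-in registry. The sketch below shows that pattern in Python under a simple assumption: decoders are selected by file extension. `FormatDecoder`, `register_decoder`, `decode` and `NvgDecoder` are hypothetical names for illustration, not Luciad's API, and the NVG decoder is a stub that does no real parsing.

```python
from abc import ABC, abstractmethod
from pathlib import Path


class FormatDecoder(ABC):
    """Hypothetical extension point: one subclass per supported data format."""

    extensions: tuple[str, ...] = ()

    @abstractmethod
    def decode(self, path: Path) -> dict:
        """Read the source in place and return a description of its contents."""


# Registry mapping a lower-case file extension to the decoder that handles it
_DECODERS: dict[str, FormatDecoder] = {}


def register_decoder(decoder: FormatDecoder) -> None:
    """Make a decoder available for every file extension it declares."""
    for ext in decoder.extensions:
        _DECODERS[ext.lower()] = decoder


def decode(path: Path) -> dict:
    """Dispatch to whichever registered decoder handles this file's extension."""
    decoder = _DECODERS.get(path.suffix.lower())
    if decoder is None:
        raise ValueError(f"No decoder registered for {path.suffix!r}")
    return decoder.decode(path)


class NvgDecoder(FormatDecoder):
    """Illustrative stub for NVG files; real parsing is omitted."""

    extensions = (".nvg",)

    def decode(self, path: Path) -> dict:
        # A real decoder would parse the NVG XML here; this stub only reports the format.
        return {"format": "NVG", "source": path.name}


register_decoder(NvgDecoder())
```

Because the dispatcher only consults the registry, supporting another source, such as a radar feed, would mean adding one subclass and one `register_decoder` call without touching existing code.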

GIS can help build the future of a community, support a country's security, form the basis of a mission planning system and open new avenues of revenue and profit for a company. However, these benefits can only be realised if the right system is specified and purchased. Too many organisations have wasted time, money and resources by trying to save money or by cutting back on the initial requirements assessment. Research shows that systems proven in the crucible of deployed operations have the robustness required to deliver in other markets.

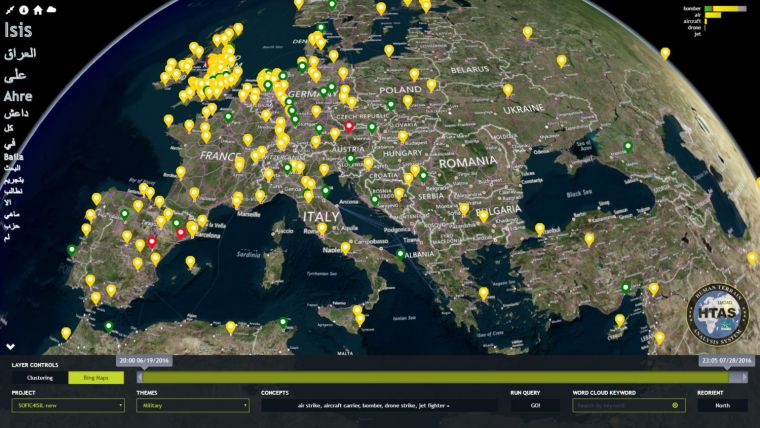

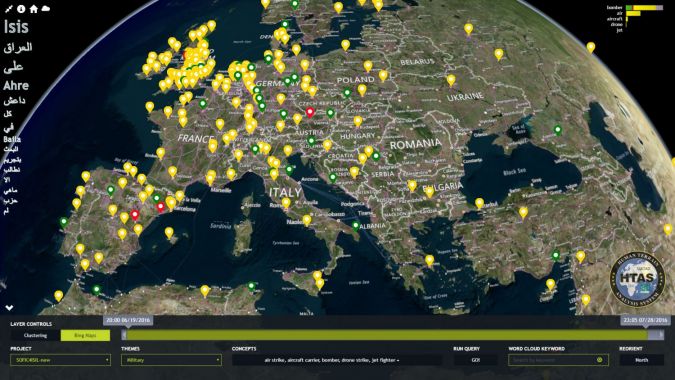

One of Luciad's partners is Sc2 Corp. Conversations on social media reveal valuable information for decision-makers, and leaders now have a new tool to capture and analyse posts and tweets while keeping what they find private. Sc2 Corp created the Human Terrain Analysis System, an application for analysing social media communication that uses Luciad technology to map where conversations occur and plot when they happened. The system also analyses what people are saying. All of this takes place in an appliance purchased by the user, not in the cloud.

To monitor social media, Sc2 Corp needed to analyse data in 40 languages and examine the linguistics of the posts and tweets. It also wanted to map and plot the times of conversations, so it partnered with IBM and Luciad to design the system. Users can choose to view mapped data in two or three dimensions. A concert promoter, for example, can see where people are talking about particular musicians, and retailers can gauge customer interest in particular products in their neighbourhood. In half a day, the application can analyse the same amount of data that 20 people would take a full day to analyse, which is why it is becoming popular among governments, insurance companies, investment agencies and marketing groups.
