Urban Energy Modelling

Semantic 3D City Data as Virtual and Augmented Reality

The visualisation of results from urban energy modelling and simulation is a crucial part of energy research, as it is the main communication tool among scientists, engineers and decision-makers. Because energy modelling and simulation results are directly linked to spatial objects, 3D visualisation becomes a necessity. Multiple interactive 3D visualisation environments exist, such as Esri’s CityEngine or the Cesium web globe. However, these applications are mainly designed as 3D viewers for desktop machines and lack immersive components that allow users to explore and interact with the results in the real environment on-site.

In this study, the team focused on a holistic approach that implements a seamless transition from a traditional virtual map view to virtual reality (VR) and augmented reality (AR) modes in a single mobile application. This is of particular interest to experts and decision-makers, as it gives them the means to explore results on-site through AR or VR. These two visualisation techniques have been combined with traditional maps to provide a better overview and strategic planning capabilities.

Multimodal visualisation

The multimodal application has been implemented with the Glob3 Mobile framework (Santana et al., 2017). This software development kit (SDK), presented in Suárez et al. (2015), is a mobile-oriented framework for the development of map and 3D globe applications and is highly configurable in its user-navigation and level-of-detail strategies. The framework has recently demonstrated the possibilities that mobile devices offer for planning complex infrastructures and working with large datasets, which makes it suitable for the present research.

Data infrastructure requirements

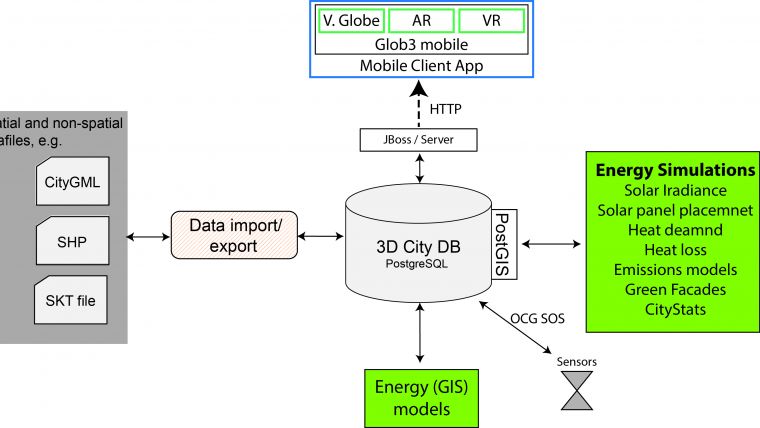

Given the complex nature of multidisciplinary energy-related modelling, datasets commonly differ in spatial and temporal resolution, data structure or storage format, which requires intensive data integration work. Therefore, in order to maintain flexibility, all datasets are stored in an open-source data infrastructure based on a PostgreSQL database with PostGIS for spatial capabilities. A major requirement of the mobile prototype is its connectivity to the PostgreSQL database and to the CityGML data structure in which building information and energy models are stored. A benefit of using the CityGML standard instead of other geospatial data formats is that semantic information about each surface or element of a building can be stored. In addition, the object-modelling specifications include different levels of detail (LoDs) (Döllner et al., 2006). The concept of LoDs can be used not only for semantic object abstraction but also for cartographic visualisation purposes. For efficient central storage of information from diverse energy models and spatial analysis tools, the 3DCityDB, a simplified CityGML database, is used (see Figure 1).

Glob3 Mobile API

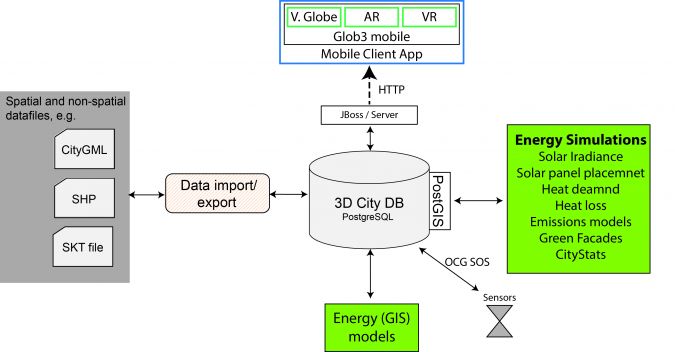

The Glob3 Mobile (G3M) API allows the generation of map applications in 2D, 2.5D and 3D following a zero third-party dependencies approach, and provides native performance on its three target platforms (iOS, Android and HTML5). 3D graphics rely on Khronos Group APIs: OpenGL ES 2.0 on portable devices and WebGL (the web counterpart of OpenGL ES) in the HTML5 version. Features of this framework include multi-LoD 3D rendering and automatic shading of objects. Due to the multiplatform nature of the G3M API, portability to Android or HTML5 is possible (Figure 2).

A major part of this work was dedicated to the development and integration of new features that support seamless VR and AR modes in the G3M application core. On the hardware side, the location of the device is determined via GPS, whereas the orientation of the camera is obtained by processing the readings of the embedded accelerometers.

The different platforms on which G3M runs represent the camera attitude in different ways. These new features, now added to the G3M repository, aim to provide a common description of the device positioning so that VR and AR functionalities and applications can be developed in a multiplatform fashion.
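To illustrate how accelerometer readings relate to device orientation, the following minimal sketch derives pitch and roll from a single gravity reading. It is not the G3M implementation: the function name `tilt_from_gravity`, the axis convention and the assumption that the device is at rest are all illustrative choices. A gravity reading alone constrains only the tilt of the device, not its heading.

```python
import math


def tilt_from_gravity(ax, ay, az):
    """Return (pitch, roll) in degrees from one accelerometer reading.

    Assumptions (illustrative, not taken from G3M):
    - the device is at rest, so the reading is dominated by gravity;
    - a reading of (0, 0, 1) means the device is lying flat.
    """
    # Pitch: inclination of the x axis relative to the horizontal plane.
    pitch = math.atan2(-ax, math.hypot(ay, az))
    # Roll: rotation about the x axis.
    roll = math.atan2(ay, az)
    return math.degrees(pitch), math.degrees(roll)


if __name__ == "__main__":
    # A device lying flat shows no tilt on either axis.
    print(tilt_from_gravity(0.0, 0.0, 1.0))
```

Each platform reports such angles with its own axes and signs, which is why a common attitude description is needed before VR and AR code can be shared across platforms.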

For the AR implementation, careful mathematical modelling of the camera projection was carried out. The OpenCV library was used to determine how this projection is applied to a reference object, which yields the internal (intrinsic) parameters of the device’s camera.
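The role of the internal camera parameters can be illustrated with the standard pinhole projection, which maps a 3D point in camera coordinates to a pixel position. The sketch below is a simplification under stated assumptions: lens distortion is ignored, the camera looks along the positive z axis, and the focal lengths and principal point passed in the example are hypothetical values rather than calibration results from the study.

```python
def project_point(point_cam, fx, fy, cx, cy):
    """Project a 3D point (camera coordinates) to pixel coordinates.

    Pinhole model without lens distortion. fx, fy are focal lengths in
    pixels and (cx, cy) is the principal point; in practice these come
    from a camera calibration.
    """
    x, y, z = point_cam
    if z <= 0:
        # The point lies behind the camera and cannot be drawn.
        return None
    u = fx * x / z + cx
    v = fy * y / z + cy
    return (u, v)


if __name__ == "__main__":
    # Hypothetical intrinsics for a 1280 x 720 image.
    print(project_point((1.0, 0.5, 10.0), fx=1000.0, fy=1000.0, cx=640.0, cy=360.0))
```

When the intrinsic parameters used for rendering match those of the physical camera, rendered 3D objects line up with the corresponding features in the camera image.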

Application Design

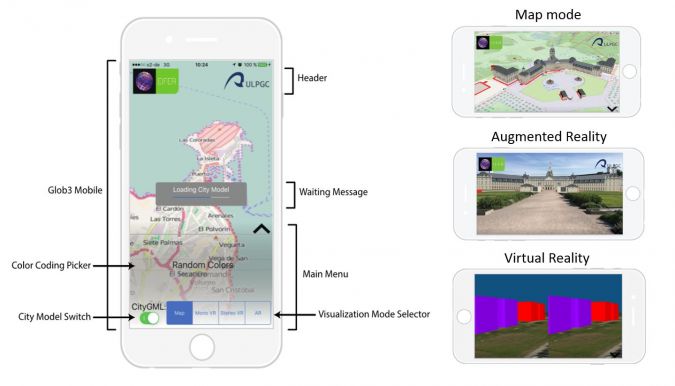

As a proof of concept, a first implementation of the application has been developed and tested on iOS, making it compatible with any iPhone or iPad running iOS 9.0 or newer (Figure 3).

The app is divided into the following viewing modes:

- Classic Map mode, which offers an aerial view of the whole dataset. The user can navigate by using touch commands, exploring the city model in different visualisations and selecting the city structures to manually inspect the assets.

- Mono VR mode, which allows VR visualisation of the urban model in situ by holding the mobile device without the use of a VR headset.

- Stereo VR mode, which implements stereoscopic rendering. The app must be used inside a VR headset to experience a depth-enriched visualisation.

- AR mode, in which the images captured by the device’s camera are merged with the 3D-rendered scenario. This enhances the elements present in the scene with contextual information.

Use cases for multimodal visualisation in the energy domain

Three case studies served as proofs of concept for the developed app. In the first use case, the researchers demonstrated the visualisation of energy efficiency values generated by energy models developed at EIFER on LoD1 and LoD2 CityGML building models in Karlsruhe, Germany. The user is able to interactively explore values such as heat demand and CO2 emissions or energetic properties such as wall-to-surface ratios directly on-site in AR through the lens of the smartphone (Figure 4, left). As a second use case, the visualisation of underground infrastructure such as pipes, electrical wires or telecommunication lines was demonstrated. The underground structures shown in Figure 4 (right) are visualised directly from CityGML. The CityGML Utility Network ADE was used to store and visualise semantic properties such as the diameter or flow rate of a pipe directly in CityGML.

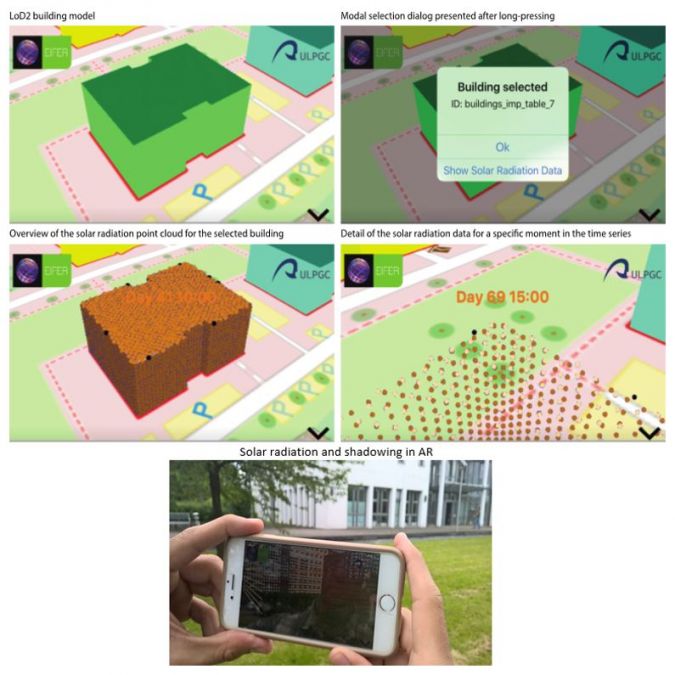

The third use case shows the capability of dynamic multimodal data visualisation. Outputs from a vertical solar radiation energy model have been implemented. The solar radiation data model consists of massive sets of points associated with a time series that describes the intensity of the received sunlight at a 1 m resolution (Wieland et al., 2015). This form of visualisation is particularly useful in AR mode, as the user can explore solar gains and façade shadowing of potential solar installations as animations on-site. In order to visualise and animate the time series data, the user selects the building of interest. Once the selection has been made, the user is prompted with a command that triggers the visualisation of the point cloud associated with the selected building (Figure 5). To display this solar radiation model, the geometry model of the building becomes invisible and is replaced by a point cloud. The vertices of such point clouds are stored server-side and retrieved by the client on demand. With the geometry in place, a periodic task scans the data series, converting the radiation values into colours using linear interpolation.
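The conversion of radiation values into colours can be sketched as a linear interpolation between two endpoint colours. The example below is a minimal illustration, not the code used in the app: the function names, the blue-to-red colour ramp and the value range are assumptions chosen for the example.

```python
def radiation_to_rgb(value, vmin, vmax, low=(0, 0, 255), high=(255, 0, 0)):
    """Map a radiation value to an RGB colour by linear interpolation.

    The colours `low` and `high` are hypothetical endpoints; values
    outside [vmin, vmax] are clamped to the nearest endpoint.
    """
    if vmax <= vmin:
        raise ValueError("vmax must be greater than vmin")
    # Normalise the value to the range 0..1 and clamp it.
    t = (value - vmin) / (vmax - vmin)
    t = max(0.0, min(1.0, t))
    # Interpolate each colour channel separately.
    return tuple(round(a + t * (b - a)) for a, b in zip(low, high))


def frame_colours(series_per_point, step, vmin, vmax):
    """Return one colour per point for a given time step of the series."""
    return [radiation_to_rgb(series[step], vmin, vmax) for series in series_per_point]


if __name__ == "__main__":
    # Two points with three time steps each (made-up values).
    series = [[0.0, 400.0, 800.0], [100.0, 100.0, 100.0]]
    for step in range(3):
        print(step, frame_colours(series, step, vmin=0.0, vmax=800.0))
```

Calling `frame_colours` once per time step, for example from a periodic task, produces the sequence of colourings that makes up the animation.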

Conclusion and Outlook

The use of VR and AR for the exploration of urban models and multiple energy simulation outputs has been successfully demonstrated. Likewise, the VR modes have been tested for energy planning purposes, where users could explore the heat energy loss and gain model in an immersive environment. However, as only an LoD2 CityGML model was used in this proof of concept, a higher level of detail is necessary in future versions for a more realistic display.

The AR mode was successfully tested for the dynamic visualisation of point clouds generated by a vertical solar radiation model (SolarB). The user was able to select a building and visualise solar gains and the shadowing effect of neighbouring buildings as animations that blend with the environment. This use case sparked great interest, as it is a visual way to communicate the benefits and drawbacks of potential future solar panel installations. Furthermore, the seamless switch from map mode to VR or AR mode was beneficial for users when exploring the study site in the real environment. Without changing from one device or application to another, the user could rapidly change the data visualisation perspective and consequently analyse the data more efficiently.

Currently, only GPS and inertial measurements of the smartphone are used for positioning. However, as higher precision is sometimes required (e.g. for underground structures), additional positioning technologies such as Wi-Fi fingerprinting, Bluetooth localisation or the use of visual landmarks are being evaluated.
